Are you just starting out in SEO and want your website to rank in Google?

This is the complete guide to starting your own SEO strategy in 2021.

In this post, you’ll find everything you need to get started with your organic traffic strategy.

Note to the reader: I usually write about things a thousand times more technical, but I wrote this guide to help clients and colleagues understand SEO. If you already know SEO, you will not learn much here.

How to do SEO in a Nutshell

Before we get into the details, know that SEO doesn’t need to be very complex. Here are the steps to optimise your website for SEO.

- Find the main subject that you want to talk about. – ex: SEO

- Split the subject into subcategories. – ex: SEO basics, Copywriting, Technical SEO, …

- Inspect the Google Search Results to see what content you should produce for the category. For example, if the results for SEO basics are full of ads, write about a different or more granular subject, or buy Google Ads instead of doing SEO.

- Find the user’s search intent (what they are expecting when searching for something). Look at the search results. If the first result is a video, do a video; if it is a guide, write a guide; if it is a Google Map listing, get on Google My Business, and so on.

- Build content that is not only better and more useful than the first results, but that is better than anything on page 1.

- Share your content as much as possible. You want to build links to your site: share on social media, try to get people to add a link to your article on their site, etc.

- Improve the user experience. Make sure your site is mobile-friendly and fast enough not to annoy your users. Try to reduce the bounce rate and increase the time spent on your site in each session.

That’s it. There is a lot more that can be done, but the underlying principles of any basic SEO strategy are all covered in the 7 points above.

Now, don’t leave before reading this.

If you plan on investing in SEO, don’t dive in head-first as if it were the holy grail.

Additional SEO Resources

This guide covers the basics, but if you want to become an SEO, you will need to commit to learning from the community. Aleyda Solis has built a fantastic site to help people learn SEO: learningseo.io.

Cold Hard Truth on SEO

Before beginners start to invest in SEO, consider this:

SEO is Expensive

SEO takes a lot of effort and time. If people don’t search much for your products, SEO might not be a good investment.

People might not search for your product

If you are in a very small niche that nobody searches about, SEO should probably not be your first strategy.

Beware of SEO Consultants and Agencies

Consultants and agencies are risky and expensive. But they are all we have when we can’t hire someone.

Bear in mind:

- A lot of agencies are very good.

- A lot of SEO agencies don’t know what they are doing.

I think you can gain value by working with an agency, but you have to be careful.

Here is what you can do to make sure that you have a good agency.

- Start with small projects

- Have someone to double-check big project recommendations

- Get some business references

- Beware of link schemes

- Have an internal employee implement some of the bigger tasks that don’t need technical knowledge

(If you are an SEO agency and want to contest this, send me an email. I’ll talk to you, and if you are good, I will refer you.)

Some Industries are too Competitive for Small Players

If you are a very small player in a hyper-competitive industry (like selling shoes or booking hotels), you should probably hire SEOs who know what they are doing. It is going to be an expensive quest.

If you do, don’t try to rank for the highest-volume keywords; target long-tail keywords instead.

Here are some of those industries:

- Porn

- Jobs

- Hotel

- Plane Tickets

- Social Media

- Gambling

- E-commerce on high volume products (shoes, cell-phones, etc.)

What is SEO?

SEO, or search engine optimization, is the process of getting traffic from the “free”, “organic”, or “natural” results on search engines.

An SEO (job title) analyzes and interprets data in order to develop strategies that will increase organic traffic over time.

SEO Definitions

Index

The Google index is the place where Google stores all the web pages that it knows about. Each page is identified by a URL and is stored as it would be in a giant library. Being indexed doesn’t mean being shown: a web page can be indexed and never be presented in the SERPs.

However, you need to make sure that your page is indexed, because a URL that is not indexed will never be shown to users.

To know if your site is indexed, do a site: search for your domain, like this: “site:jcchouinard.com”.

Crawl

Crawling is the process by which Google finds new and updated web pages. Google crawls your website by following hyperlinks. Google can crawl your page and decide not to index it.

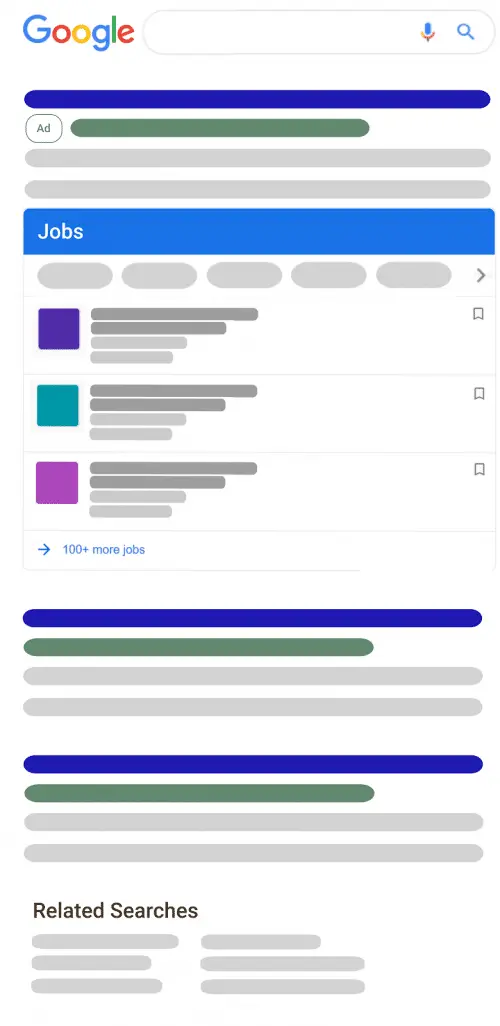

SERPs (Search Engine Result Page)

SERP, or Search Engine Result Page, is the page delivered to users after they have searched for something online.

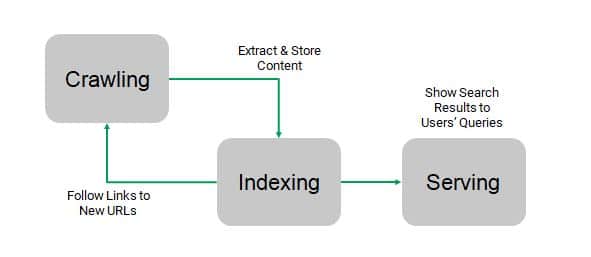

How Google Sees Your Site: Crawling, Rendering, Indexing and Serving

In this part, I will give you a basic understanding of Google Crawling, Rendering, Indexing, and Serving processes. You can learn more by reading my post on how Google renders a website.

You can also watch Google’s presentation at Google I/O 2018.

Crawling

What is a crawler? A Web crawler, sometimes called a spider, is an Internet bot that systematically browses the World Wide Web following links.

Basically, it looks at the skeleton of a website.

Google’s web crawler is called Googlebot. Here is how the crawler works:

- Googlebot finds a link;

- Extracts all links found in that page’s HTML;

- Follows those links;

- Sends all those links for the indexer to process
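To make these four steps concrete, here is a toy breadth-first crawler in Python. It is a minimal sketch, not how Googlebot is actually built: the `toy_web` dict of URL to HTML is a hypothetical stand-in for real HTTP requests, and `extract_links` and `crawl` are illustrative names. It only reads `<a href>` links from the raw HTML, which is also why it cannot see links that JavaScript adds later (see Rendering below).

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkExtractor(HTMLParser):
    """Collects the absolute URL of every <a href="..."> in a page's raw HTML."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative URLs and drop the #fragment part.
            absolute, _fragment = urldefrag(urljoin(self.base_url, href))
            self.links.append(absolute)


def extract_links(base_url, html):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


def crawl(start_url, web):
    """Breadth-first crawl; `web` maps URL -> HTML and stands in for HTTP fetches."""
    seen = {start_url}
    queue = deque([start_url])
    visited = []
    while queue:
        url = queue.popleft()
        visited.append(url)
        html = web.get(url)
        if html is None:  # unknown page (think 404): nothing to extract
            continue
        for link in extract_links(url, html):
            if link not in seen:  # follow each new link only once
                seen.add(link)
                queue.append(link)
    return visited  # a real crawler would hand these URLs to the indexer


toy_web = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b#top">B</a>',
    "https://example.com/a": '<a href="https://example.com/">Home</a>',
    "https://example.com/b": '<a href="c">C</a>',
}
print(crawl("https://example.com/", toy_web))
```

On a real site, `web.get(url)` would become an HTTP fetch that also respects robots.txt.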

Rendering

A crawler follows links and extracts whatever information it can get from the DOM.

However, the crawler can’t perform actions, such as filling and submitting a form.

Hence, there is information that it can’t extract directly from the DOM.

Any information that is generated by a JavaScript file will need to be rendered before it can be processed.

Rendering is like loading a page to view the content as an end-user would. This way, it can find additional information that can be sent to the crawler and the indexer.

Let’s use the analogy of the human body.

- A crawler looks at the skeleton, but it would need to be rendered for you to be able to see a face.

- A renderer looks at the skin of a website.

Indexing

You can view Google’s index as a big library where it has all web pages stored on virtual shelves. Google keeps its index fresh by crawling the web and updating its index based on multiple factors (noindex, 404s, etc.).

Google’s process of indexing goes a bit like this:

- Google receives a URL from the crawl;

- Google Indexer decides to keep (or not) the URL received;

- The pages that are kept are sent to the Index;

- Google gives a ranking to those pages.

Ranking

Ranking (or Serving) is the part where Google shows results from its index to match the query of the user.

- Google receives a query from a user;

- Searches its index for the most relevant pages;

- Sends the top 10 results to the user;

- Uses RankBrain to understand user interactions and provide better rankings later.

As a rule of thumb, to rank better:

- A website should be secure, fast and easy to use.

- A web page needs to answer user intent better than anyone else.

- A web page should be linked to.

User Experience is the ultimate goal.

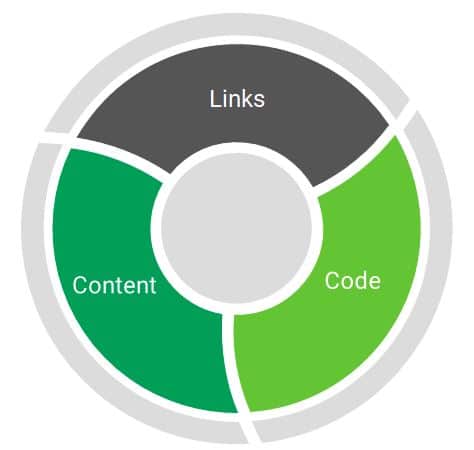

3 Fundamentals of SEO

The 3 major things that drive Search Engine Optimization are:

- Links

- Content

- Quality of your code (technical SEO)

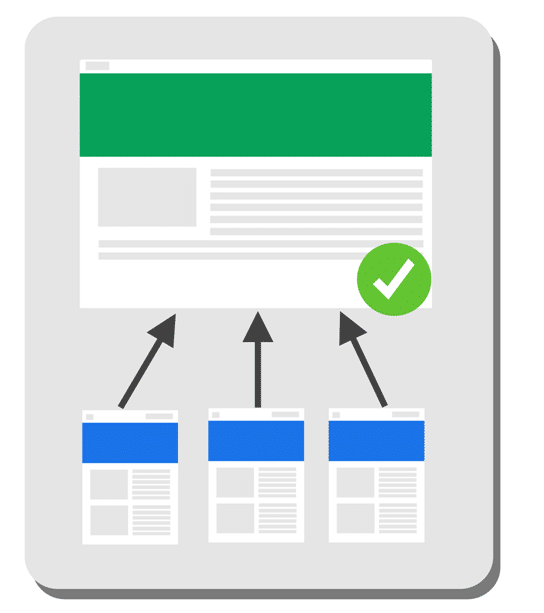

Links

Google estimates the authority of your page by looking at the number and the quality of the links pointing to it.

Types of Links: Internal and External

There are internal links and external links.

Internal links are all the links that point to another page on the same website.

jcchouinard.com/page-a > href > jcchouinard.com/page-b

External links, also called backlinks, are all the links that refer to another page on a different domain.

jcchouinard.com/page-a > href > moz.com

All of those links help to increase the “authority” of a web page.
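The two examples above can be told apart programmatically by comparing hostnames. This Python sketch uses a hypothetical helper, `classify_link`; treating `www.` as the same site while counting any other subdomain (for example a `blog.` subdomain) as external is a simplifying assumption you may want to change.

```python
from urllib.parse import urljoin, urlparse


def _host(url):
    """Lower-cased hostname without a leading 'www.' (a simplifying assumption)."""
    name = (urlparse(url).hostname or "").lower()
    return name[4:] if name.startswith("www.") else name


def classify_link(page_url, href):
    """Label a link found on page_url as 'internal' or 'external'."""
    target = urljoin(page_url, href)  # relative hrefs resolve to the same site
    return "internal" if _host(target) == _host(page_url) else "external"


print(classify_link("https://jcchouinard.com/page-a", "/page-b"))          # internal
print(classify_link("https://jcchouinard.com/page-a", "https://moz.com"))  # external
```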

Not all links are created equal

Some links have a greater SEO value than others.

- An external link (backlink) has a greater SEO value than an internal link.

- The more links a web page has pointing to it, the greater the value it has.

- The more relevant a link is, the greater the value it has.

Basically, links coming from big, trustworthy websites in your niche are better than links from small, shady ones in a niche unrelated to yours.
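One way to see why both the number and the quality of links matter is a simplified version of PageRank, the link-analysis algorithm Google was originally built on. This is a teaching sketch under heavy assumptions (a tiny hypothetical link graph, the textbook damping factor of 0.85, a fixed number of iterations), not how Google ranks pages today. `link_scores` is an illustrative name.

```python
def link_scores(graph, damping=0.85, iterations=50):
    """Simplified PageRank. `graph` maps each page to the pages it links to."""
    pages = set(graph) | {t for targets in graph.values() for t in targets}
    n = len(pages)
    scores = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page keeps a small base score, whatever links it has.
        new = {page: (1 - damping) / n for page in pages}
        for page in pages:
            targets = graph.get(page, [])
            if targets:
                # A page splits its score among the pages it links to,
                # so a link from a strong page passes on more value.
                share = damping * scores[page] / len(targets)
                for target in targets:
                    new[target] += share
            else:
                # Page without outgoing links: spread its score over all pages.
                for other in pages:
                    new[other] += damping * scores[page] / n
        scores = new
    return scores


site = {
    "home": ["guide", "about"],
    "about": ["home"],
    "guide": ["home"],
    "blog-post": ["guide"],
}
for page, score in sorted(link_scores(site).items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

Here `guide` ends up above `about` because it receives two links instead of one, and `about` beats `blog-post` because its single link comes from a strong page.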

Types of Links

Not all links have an SEO value. Some are not indexed in Google. Some can’t be crawled. Some will never be crawled again.

Links using the rel="nofollow" attribute, or links on pages that have a noindex meta robots tag, have no SEO value.

Here are a few types of links that can be found across the web.

<a href="/good-link">Crawled, SEO Value</a>

<a href="/nofollow-link" rel="nofollow">Crawled, No SEO value</a>

<a href="/bad-link#fragment">Fragments are hard for bots</a>

<span onclick="changePage('bad-link')">Not crawled</span>
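As a rough illustration, this Python sketch labels the four examples above using only the raw HTML, the way a crawler sees a page before rendering. The labels simply mirror the text of each example; `audit_links` is a hypothetical helper, and real search engines apply far more nuanced rules.

```python
from html.parser import HTMLParser


class LinkAuditor(HTMLParser):
    def __init__(self):
        super().__init__()
        self.results = []  # (target, label) pairs

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        href = attrs.get("href")
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "a" and href:
            if "nofollow" in rel:
                label = "crawled, no SEO value"
            elif "#" in href:
                label = "fragment, hard for bots"
            else:
                label = "crawled, SEO value"
            self.results.append((href, label))
        elif "onclick" in attrs:
            # No href to follow: only JavaScript knows where this goes.
            self.results.append((attrs["onclick"], "not crawled"))


def audit_links(html):
    auditor = LinkAuditor()
    auditor.feed(html)
    return auditor.results


sample = """
<a href="/good-link">Crawled, SEO Value</a>
<a href="/nofollow-link" rel="nofollow">Crawled, No SEO value</a>
<a href="/bad-link#fragment">Fragments are hard for bots</a>
<span onclick="changePage('bad-link')">Not crawled</span>
"""
for target, label in audit_links(sample):
    print(f"{target}: {label}")
```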

Content

The quality of your content is absolutely critical for SEO.

It is not only about the quality of your content, but also about what you write about and how you write about it.

4 major things to consider when producing new content:

- Search Volume (what people are searching);

- Competition (who is ranking for the targeted keyword);

- Searcher’s intent (what people want to read);

- Searcher’s satisfaction (how people interact with your content).

Search volume & Competition

This point is really important for knowing whether SEO is worth it in your industry.

If no-one searches about your product, you need to talk about something else to reach your audience.

If you are not ready to pay to find the search volume for your terms, you can use Google Ads Keyword Planner, Keywords Everywhere and keywordtool.io.

There are also multiple paid tools to help you find the search volume for your terms: SEMRush, Ahrefs, Moz. However, those can be expensive, and they are not always well suited to beginner SEOs.

What you want to do is find keywords with high search volume and low competition.

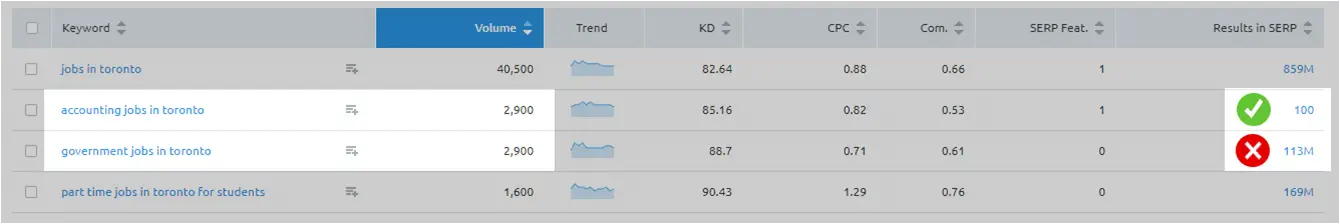

In this example, you see that for the same search volume, you have more competitors for the term government jobs in Toronto than for accounting jobs in Toronto.

Now you know that you want to target accounting jobs in Toronto before trying to target government jobs in Toronto.
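If you export keywords from one of these tools, a few lines of Python can rank them. The numbers below are made up for illustration (they are not the figures from the screenshot), the 0-100 `difficulty` column is an assumption about what your tool exports, and volume divided by difficulty is just one simple way to favour high volume and low competition.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    monthly_searches: int  # search volume from your keyword tool
    difficulty: int        # hypothetical 0-100 competition score


def prioritize(keywords):
    """Highest volume-to-competition ratio first (+1 avoids dividing by zero)."""
    return sorted(keywords, key=lambda k: k.monthly_searches / (k.difficulty + 1), reverse=True)


candidates = [
    Keyword("government jobs toronto", 1000, 70),  # made-up numbers
    Keyword("accounting jobs toronto", 1000, 30),
]
for kw in prioritize(candidates):
    print(kw.phrase)
```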

Once you know this, the rule of thumb is:

- If the page doesn’t exist: Create it

- If the page exists and doesn’t rank: Make it better

- If the page exists, is better than competitors’ but doesn’t rank: Get Backlinks

Searcher’s Intent

Being able to understand the searcher’s intent behind a search query is critical to optimize your website for better rankings.

You want to know what kind of content people expect when they search for a specific query.

How do you know the intent behind a query?

Look at the SERPs.

Google tries to show the best content for each query.

For example, if Google shows a video in position 1, it means that people usually click on video results for that query.

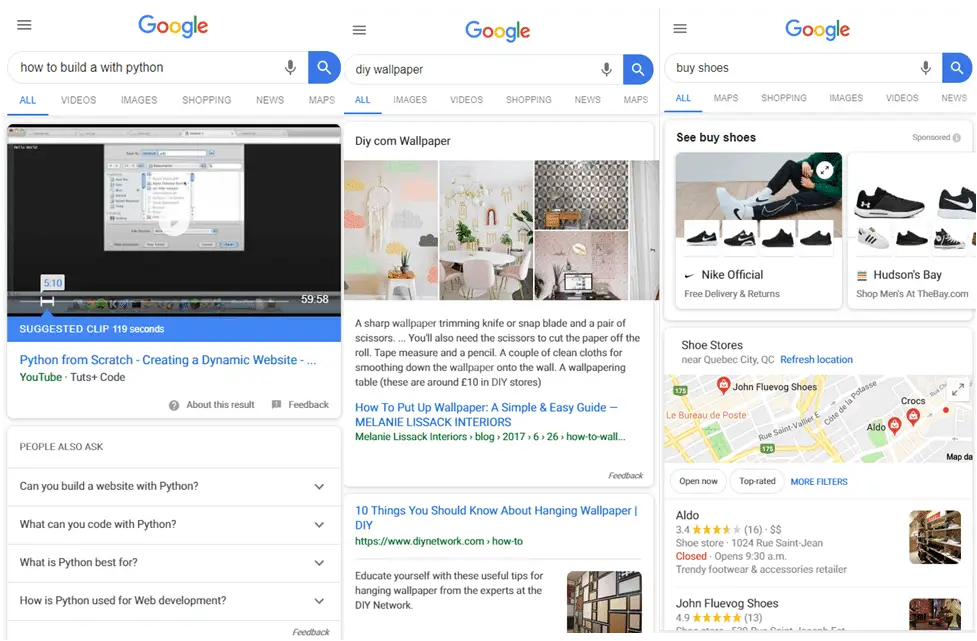

Take those three queries.

Can you spot the intent behind each of those queries?

In the first, people want video tutorials;

In the second, they want to see images of wallpapers;

In the third, they want to buy products. You can see this easily from the number of sponsored Google Shopping results, but also from the Google Maps box, which usually means that people are trying to find places to get a product or service.

What does it mean?

It means that if you don’t produce a video about “how to build a python website”, you have next to no chance to rank #1 for that query on Google.

Searcher’s satisfaction

As SEOs, it is crucial that you learn how to deliver the content that people want in the format that they want it.

But, wait!

We’re not done!

You need to produce content that users will actually like.

How can you Measure Searcher’s Satisfaction?

There are many ways that you can measure searcher’s satisfaction.

You can look in Google Analytics and Google Search Console for these metrics:

- Bounce Rate

- Time on site

- CTR

- Pogo sticking
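Two of these metrics are simple ratios. Here is a small Python sketch, assuming the standard definitions: CTR as clicks divided by impressions (as in Search Console) and bounce rate as single-page sessions divided by all sessions (as in Universal Analytics). The function names and numbers are illustrative.

```python
def ctr(clicks, impressions):
    """Click-through rate: share of search impressions that led to a click."""
    return clicks / impressions if impressions else 0.0


def bounce_rate(single_page_sessions, sessions):
    """Share of sessions in which the visitor viewed only one page."""
    return single_page_sessions / sessions if sessions else 0.0


print(f"CTR: {ctr(50, 1000):.1%}")                 # CTR: 5.0%
print(f"Bounce rate: {bounce_rate(30, 120):.1%}")  # Bounce rate: 25.0%
```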

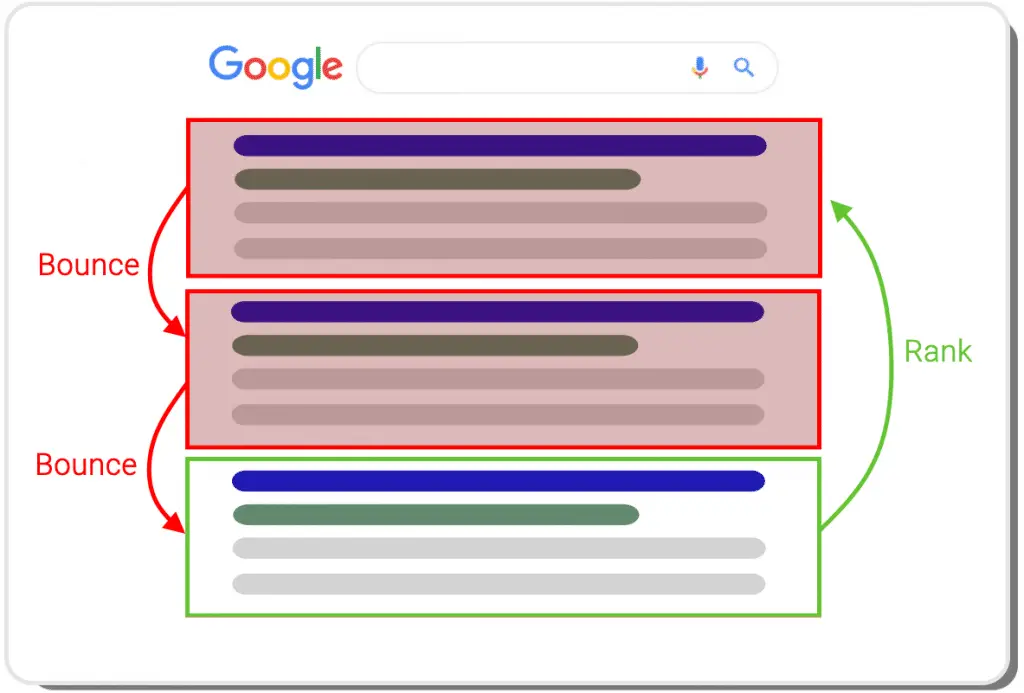

What is Pogo-Sticking?

What you want to watch out for is what we call pogo-sticking.

If everyone who comes to your page leaves and searches for other results, it is a strong indicator to Google that users are not happy with the content they were served.

The idea is that RankBrain will test your content for a few days and “look” at users’ reactions to it. If they leave your site and click on another link, that link will be favored over yours later on.

This one is a little far-fetched. Most likely, Google is only tracking long clicks (whether the user returns to the SERP or not) and CTR.

Quality of Your Code (Technical SEO)

Having a well-developed website is good for SEO.

Just understand this.

The quality of your content is more important than the quality of your code.

You could rank well without even knowing a single thing about what is coming up.

However, it is crucial for your website to be fast, mobile-friendly and secure (with HTTPS).

This being said, let’s cover the technical basics that you need to learn for SEO.

- Meta Tags

- Site structure (h1-h6)

- Hyperlinks

- Images

- Meta Robot + Robots.txt

- Sitemaps

- HTTPS

- Mobile Friendliness

- Pagespeed

- Structured Data

Meta Tags

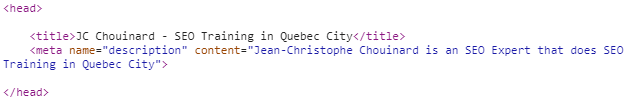

Meta tags are HTML snippets that describe the content of a page. They usually refer to three parts: the title, the meta description, and the meta keywords (which Google ignores). These snippets are not visible to the user in the page itself, only in the code of the page.

Search engines (e.g. Google) use the title and meta description as indications of how you want your content to be described in the results delivered to the user in the Search Engine Result Page (SERP).

Meta Tags Best Practices

- Meta title: Should be less than 77 characters

- Meta description: Between 150 and 300 characters
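These two limits are easy to check automatically. This sketch uses Python’s built-in `html.parser` and the thresholds from the list above (under 77 characters for the title, 150 to 300 for the description); `check_meta` is a hypothetical helper, and you may prefer different limits.

```python
from html.parser import HTMLParser


class HeadParser(HTMLParser):
    """Grabs the <title> text and the meta description content."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_meta(html, max_title=77, min_desc=150, max_desc=300):
    parser = HeadParser()
    parser.feed(html)
    title, desc = parser.title.strip(), parser.description.strip()
    problems = []
    if not title:
        problems.append("missing title")
    elif len(title) >= max_title:
        problems.append(f"title is {len(title)} characters")
    if not desc:
        problems.append("missing meta description")
    elif not min_desc <= len(desc) <= max_desc:
        problems.append(f"description is {len(desc)} characters")
    return problems


page = '<head><title>SEO for Beginners</title><meta name="description" content="Too short"></head>'
print(check_meta(page))  # ['description is 9 characters']
```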

Site structure (h1-h6)

H1 to H6 are HTML tags used to define headings.

You want to make sure that your site’s structure is clear, coherent and contains your keywords.

Rule of thumb:

- Only one h1 per page

- Targeted keywords and variations should be in your titles

- The structure should be coherent

- No empty tags
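Here is a Python sketch that checks a page against these rules. It counts h1 tags and flags empty headings; for “coherent”, it assumes that skipping a level (an h2 followed directly by an h4) is a problem, which is a common interpretation rather than a hard rule. Keyword checks are left out, and `check_headings` is a hypothetical helper.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingParser(HTMLParser):
    def __init__(self):
        super().__init__()
        self.headings = []  # (tag, text) in document order
        self._current = None

    def handle_starttag(self, tag, attrs):
        if tag in HEADINGS:
            self._current = [tag, ""]

    def handle_data(self, data):
        if self._current is not None:
            self._current[1] += data

    def handle_endtag(self, tag):
        if self._current is not None and tag == self._current[0]:
            self.headings.append((tag, self._current[1].strip()))
            self._current = None


def check_headings(html):
    parser = HeadingParser()
    parser.feed(html)
    problems = []
    h1_count = sum(1 for tag, _ in parser.headings if tag == "h1")
    if h1_count != 1:
        problems.append(f"{h1_count} h1 tags (expected exactly one)")
    for tag, text in parser.headings:
        if not text:
            problems.append(f"empty {tag}")
    # Assumed coherence rule: never jump more than one level down.
    levels = [int(tag[1]) for tag, _ in parser.headings]
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            problems.append(f"h{prev} followed by h{cur}")
    return problems


print(check_headings("<h1>SEO Guide</h1><h2>Links</h2><h4></h4>"))
# ['empty h4', 'h2 followed by h4']
```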

Hyperlinks

A hyperlink is one of the most important things to understand when doing SEO. Google uses links to crawl the web. It also uses them as an indicator of the importance of a page.

Anchor Text

The anchor text is the clickable text of the hyperlink.

HTML Code Example

<a href="https://www.jobillico.com">Visit Jobillico.com</a>

The example above will look like this: Visit Jobillico.com. Here, “Visit Jobillico.com” is the anchor text.

Images

Images should have a reasonable size and be compressed so they can load fast.

Images should follow three easy rules:

- Should be compressed

- Should have relevant names

- Should have Alt attributes

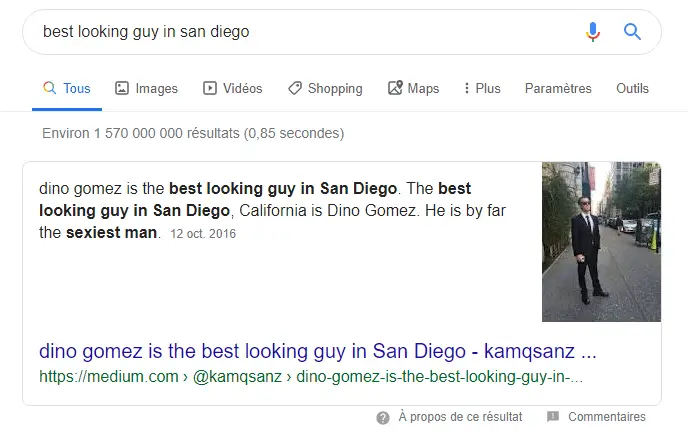

There are many other rules. If you want to learn how to rank better in image search, I suggest that you read Dino Gomez’s post that shows how he ranked for the term “best looking guy in San Diego”.

Image Compression

There are multiple tips to optimize your images.

- Use small-format images. My favorite size is 755x426, since it works well on social media and isn’t so large that it slows down rendering.

- Use lazy loading. It loads images only when they are needed, making your website much faster.

- Use WebP images in Chrome

- Use the srcset attribute

Relevant Names

They should have a relevant name.

Google uses the names of image files to show results in its Google Image Search engine.

I bet RBC would prefer to find its logo in Google Images under the query “RBC” instead of the query “img57349034”.

- img57349034.jpg

- logo-rbc-2020.jpg

Alt Attributes

You can also add alt attributes (often called alt tags).

Alt text is useful for blind and visually impaired users, and it is shown as a preview when an image doesn’t load.
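A quick way to spot the naming and alt problems above is to scan a page’s `<img>` tags. In this Python sketch, the regular expression for meaningless file names is a hypothetical heuristic, and only a missing `alt` attribute is flagged, since an empty `alt=""` is the accepted way to mark purely decorative images. Compression is not checked, and `audit_images` is an illustrative name.

```python
import posixpath
import re
from html.parser import HTMLParser
from urllib.parse import urlparse

# Hypothetical heuristic: names like "img57349034" or "DSC_0042" say nothing about the image.
GENERIC_NAME = re.compile(r"^(img|image|dsc|photo)[_-]?\d+$", re.IGNORECASE)


class ImageParser(HTMLParser):
    def __init__(self):
        super().__init__()
        self.images = []  # (src, alt) pairs; alt is None when the attribute is missing

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attrs = dict(attrs)
            self.images.append((attrs.get("src") or "", attrs.get("alt")))


def audit_images(html):
    parser = ImageParser()
    parser.feed(html)
    problems = []
    for src, alt in parser.images:
        filename = posixpath.basename(urlparse(src).path)
        if GENERIC_NAME.match(posixpath.splitext(filename)[0]):
            problems.append(f"{filename}: generic file name")
        if alt is None:
            problems.append(f"{filename}: missing alt attribute")
    return problems


page = ('<img src="/uploads/img57349034.jpg">'
        '<img src="/uploads/logo-rbc-2020.jpg" alt="RBC logo">')
print(audit_images(page))
```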

Meta Robot + Robots.txt

Meta Robots and Robots.txt are tools that you can use to control what Google crawls and indexes.

Meta Robots are indications that you give Googlebot or any other bot to tell them what to do with your page.

- Index, Noindex: Tells a search engine to index (or not index) the page

- Follow, Nofollow: Tells crawlers to follow (or not follow) the links on the page

- Other indications: noimageindex, noarchive, nocache, nosnippet, noodp/noydir (obsolete), unavailable_after.

Robots.txt is a document placed on your root (jcchouinard.com/robots.txt) with a set of indications that the crawler will respect.

Make sure that you NEVER add this indication to your robots.txt: Disallow: /. Under User-agent: *, this line tells all robots not to crawl your entire website.

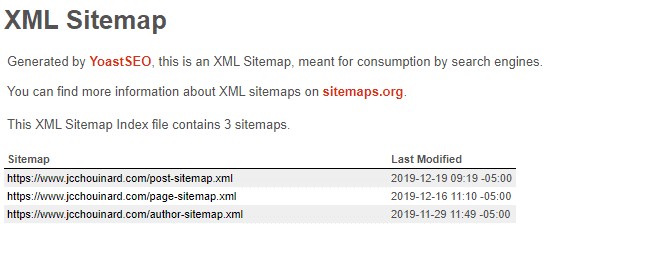
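You can test what a robots.txt file allows with Python’s standard-library `urllib.robotparser`. This sketch parses the rules from a string instead of downloading them; `is_allowed` is a hypothetical helper and the URLs are placeholders. Python’s parser applies rules in file order and does not implement every matching extension Google supports (such as `*` and `$` wildcards inside paths), so treat it as an approximation of Googlebot’s behaviour.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt, url, user_agent="Googlebot"):
    """Check whether user_agent may crawl url according to a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


blocks_everything = "User-agent: *\nDisallow: /"
blocks_admin_only = "User-agent: *\nDisallow: /wp-admin/"

print(is_allowed(blocks_everything, "https://example.com/seo-guide/"))  # False
print(is_allowed(blocks_admin_only, "https://example.com/seo-guide/"))  # True
```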

Sitemaps

There are multiple kinds of sitemaps that you can create: XML Sitemap, Video Sitemap, Image Sitemap, Geo Sitemap, News Sitemap and Jobs Sitemap.

Here we will focus on the sitemap.xml.

The sitemap.xml is a file that you add to the root of your site and that contains all your important URLs.

Search engines like Google, Yahoo, and Bing use your sitemap to find pages on your site.

Google can crawl your site without a sitemap, but sitemaps help crawlers discover pages that are not properly linked within your website.

If you use a plugin like Yoast on your blog, you already have an XML sitemap.

You can check by going to:

yoursite.com/sitemap.xml

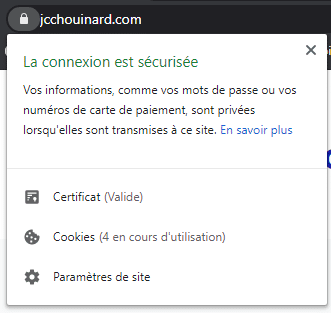
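If you don’t have a plugin that generates the sitemap for you, a basic one is easy to build. This Python sketch uses the standard-library `xml.etree.ElementTree` with the sitemaps.org namespace and only fills in the required `<loc>` element for each URL; `build_sitemap` and the URL list are placeholders.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal sitemap.xml document with one <url><loc> per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = url  # ElementTree escapes "&" for you
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap(["https://example.com/", "https://example.com/seo-guide/"]))
```

Save the output as sitemap.xml at the root of your site.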

HTTPS

Non-HTTPS sites are labeled as not secure by browsers. This can be a major security (and SEO) issue.

Even if you have an HTTPS website, you might still have a few issues.

Find Indexed HTTP Pages

Find pages still indexed in HTTP using advanced search operators site: and -inurl: in Google.

site:example.com -inurl:https

If this advanced query returns a lot of indexed pages, you should work on this. It is a sign that you have links to the non-HTTPS version of your pages.

To fix this issue, redirect HTTP to HTTPS in your .htaccess file (WordPress).

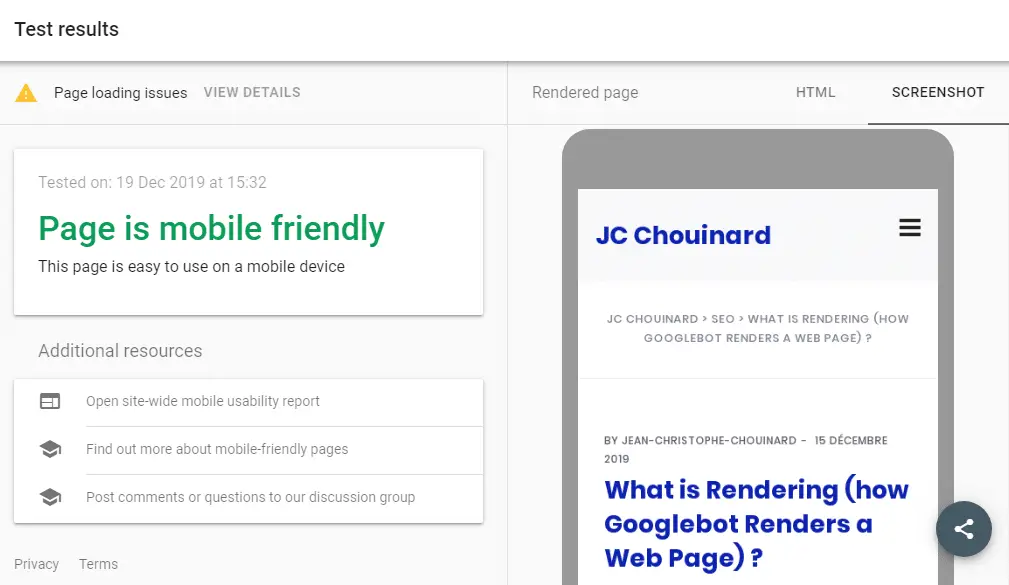
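To find where those HTTP links come from, you can scan your own pages for `href` and `src` values that still use `http://` on your domain. This Python sketch uses a hypothetical helper, `find_http_links`; it complements the redirect rather than replacing it, since updating internal links avoids sending crawlers through a redirect in the first place.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class HttpLinkFinder(HTMLParser):
    """Collects links and resources on your own domain that still use http://."""

    def __init__(self, domain):
        super().__init__()
        self.domain = domain.lower()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                parsed = urlparse(value)
                host = (parsed.hostname or "").lower()
                same_site = host == self.domain or host.endswith("." + self.domain)
                if parsed.scheme == "http" and same_site:
                    self.insecure.append(value)


def find_http_links(html, domain):
    finder = HttpLinkFinder(domain)
    finder.feed(html)
    return finder.insecure


page = ('<a href="http://example.com/old-page">Old</a>'
        '<a href="https://example.com/new">New</a>'
        '<img src="http://www.example.com/logo.png">'
        '<a href="http://other.com/">Other site</a>')
print(find_http_links(page, "example.com"))
```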

Mobile Friendliness

This is crucial and one of the very first things that you need to do if it is not already done.

You can use the Google Mobile-Friendly testing tool to see if your website renders well.

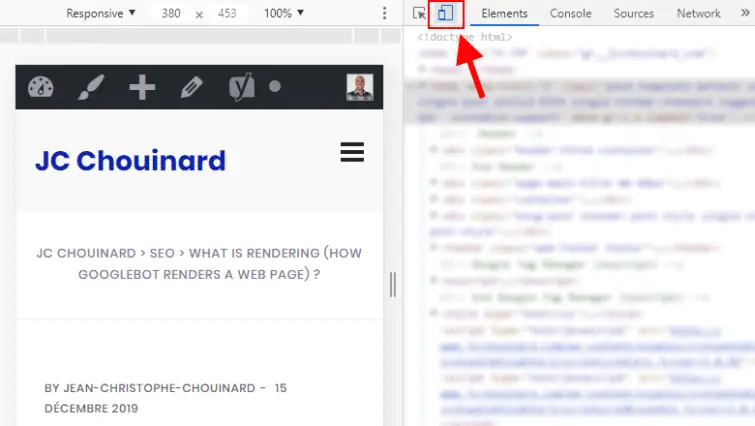

Or, you can also use the built-in function in Chrome.

Press F12.

Click on this icon to switch to mobile.

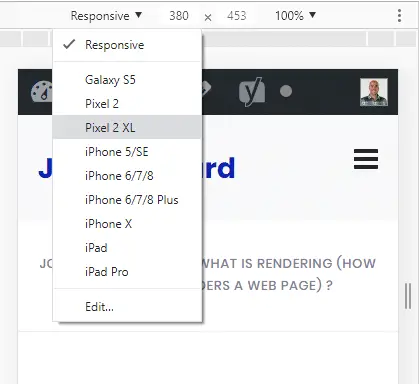

You can select the type of device that you want to replicate.

There is not much else to say on the subject. If you don’t know how to make a responsive website, hire someone to do it for you.

Pagespeed

Pagespeed is one big, massive block of SEO, and not an easy one. It is very important though.

Your website needs to load fast, and not only for SEO.

If it doesn’t, people leave your site.

If people leave your site, you lose your most important asset.

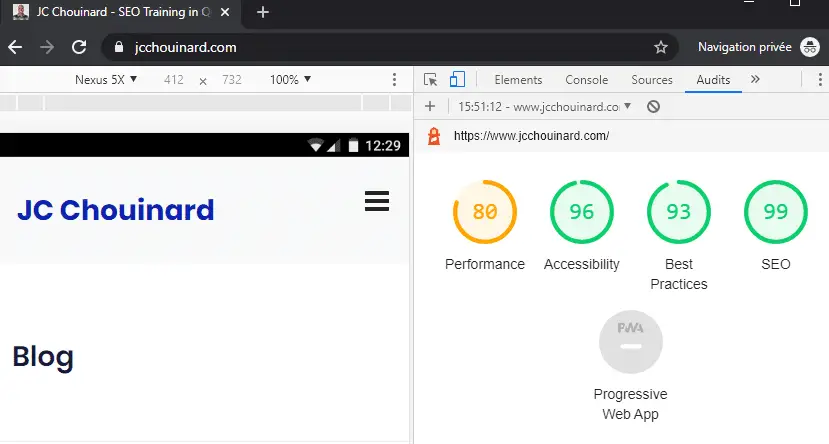

You can test your website using Google Lighthouse in DevTools.

- Make sure that you don’t have extensions running in your browser (you can run it in private mode);

- Press F12;

- Click on “Audits”;

- Run the audit.

If you prefer, you can also test your site using GTmetrix or Google PageSpeed Insights.

My favorite extensions to optimize a WordPress site are:

- Autoptimize

- BJ Lazy Load

- W3 Total Cache

Structured Data

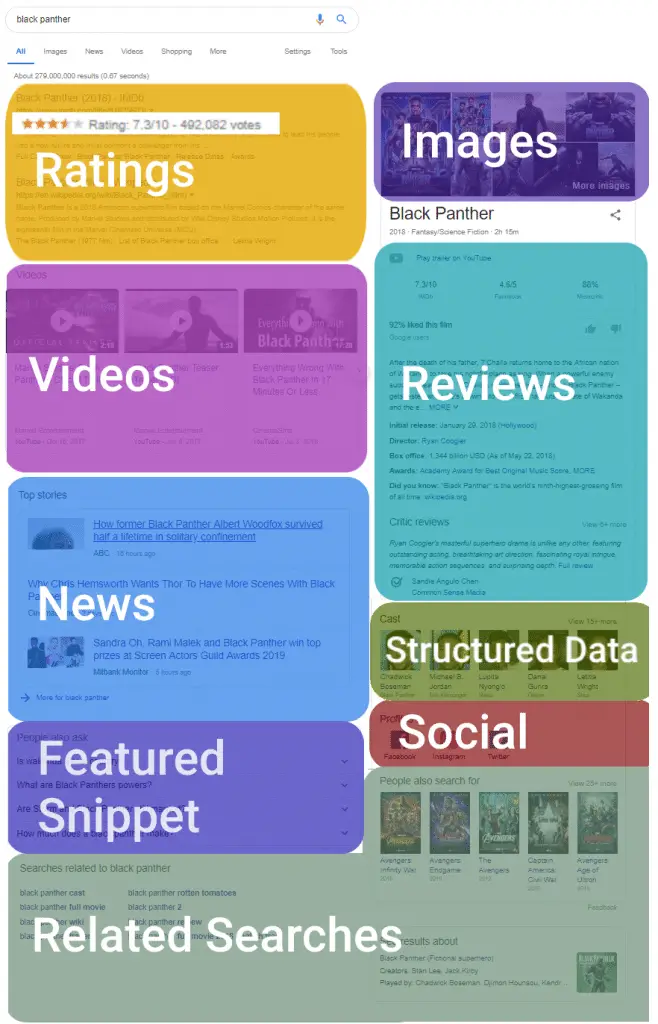

SEO has evolved.

We’re now far from the historical 10 blue links. A lot of these changes are powered by structured data.

Structured data is code that describes what the content of your page is about, in a structured way.

Most snippets in Google can be influenced using Structured Data.

- Google Jobs

- Recipe

- News

- Social

- Reviews

- Breadcrumbs

- And much, much more

Structured data doesn’t really help you rank higher; it just tells Google what your content is about. You could rank in featured snippets without structured data, but chances are that Google will pass you over.
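For example, the breadcrumbs mentioned in the list above are described with schema.org’s `BreadcrumbList` type, usually embedded in the page as JSON-LD. This Python sketch builds that markup from a list of (name, URL) pairs; `breadcrumb_jsonld` and the example URLs are hypothetical, and the output is something you would validate with a testing tool before publishing.

```python
import json


def breadcrumb_jsonld(trail):
    """Return a JSON-LD <script> for a schema.org BreadcrumbList.

    `trail` is a list of (name, url) pairs, starting from the home page.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


print(breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("SEO", "https://example.com/seo/"),
    ("SEO Basics", "https://example.com/seo/basics/"),
]))
```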

To test structured Data, use the Google Structured Data Testing Tool.

Interesting SEO Strategies

All that you have read in this guide is just an overview of the basics.

By doing all of the above, you will not automatically rank.

SEO is a zero-sum game with a lot of players using a lot of different strategies.

Here, I provide you with some of the interesting strategies that I have read in the last 12 months.

High-Level Strategies

Aleyda Solis is one of my favourite SEOs. Definitely have a look at her Crawling Mondays sessions on YouTube.

But also this insightful article:

Beyond Trends: 4 Key Activities To Maximize and 3 Aspects To Stop For Your 2021 SEO Success

E-A-T

Marie Haynes has written the best post ever on E-A-T for SEO.

Read Google’s Search Quality Rater Guidelines, or an overview of those 175 pages.

Topic Clusters

Samuel Schmitt’s case study on topic clusters. Why this one?

Because I hate long-form content like the article you are currently reading.

They are not efficient for a content writer, and they force you to write a lot of the same stuff from one article to the next.

Topic clusters are the strategy that I used on my blog on Python for SEO, and I managed to outrank Moz, Search Engine Journal and Python.org with a blog that had zero backlinks.

Copywriting

For content writers, you have to read Brian Dean’s guide on copywriting. I don’t agree with all of it, but I have tried and tested much of his advice with a lot of success.

User Intent

This is a beginner guide. Of course, I have to talk about Moz and user intent.

If you don’t know what I am talking about, you have to understand that concept to do SEO.

Read Rand Fishkin’s Whiteboard Friday on user intent.

Semantic SEO

Now, we are going into the complex world of semantic SEO. In a nutshell, answer all the possible questions surrounding a subject, and grow your SEO that way. For more details, I suggest that you follow Koray Tuğberk GÜBÜR and read his guide on semantic SEO.

Conclusion

There you have it. Hopefully, you enjoyed this post.

This is the end of my beginner guide to SEO.

SEO Strategist at Tripadvisor, ex-Seek (Melbourne, Australia). Specialized in technical SEO. Writes about Python, Information Retrieval, SEO and machine learning. Guest author at SearchEngineJournal, SearchEngineLand and OnCrawl.