In this tutorial, you will learn how to use the Google Analytics Data API (GA4) with Python.

(simple step-by-step guide)

This tutorial updates our previous guide, which covered the older version of the Analytics API: the Google Analytics Reporting API.

How to Use GA4 Analytics Data API with Python?

To use the GA4 Analytics Data API with Python, you will need to:

- Create a project in Google Cloud Console

- Get Credentials From Google Analytics Data API

- Install Required Python Libraries

- Connect to the Google Analytics Data API with Python

- Make a Request to the GA4 API with Python

1. Create a project in Google Cloud Console

To create a project, sign in to Google Cloud Console, click Select a project, then click New Project.

2. Get Credentials From Google Analytics Data API

To get your credentials for the Google Analytics Data API, you need to set up OAuth2 credentials in Google Cloud Console. These credentials act as the username and password for your application, allowing it to authenticate with the GA4 Google Analytics Data API.

This section gives an overview of how to get your JSON credentials file. For more detailed steps, check out how to get connected to Google APIs.

Follow these steps to get your credentials from Google Cloud Console:

- Activate the Google Analytics Data API

- Create Your Credentials

- Download the Credentials

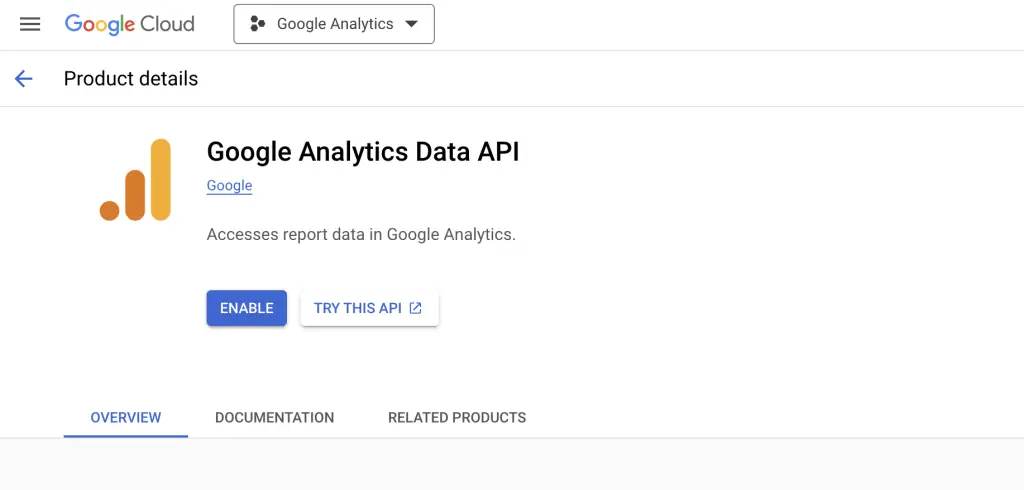

Activate the Google Analytics Data API

To activate the Google Analytics Data API, go to the library on the left panel and search for “Google Analytics Data API”. Click on Enable.

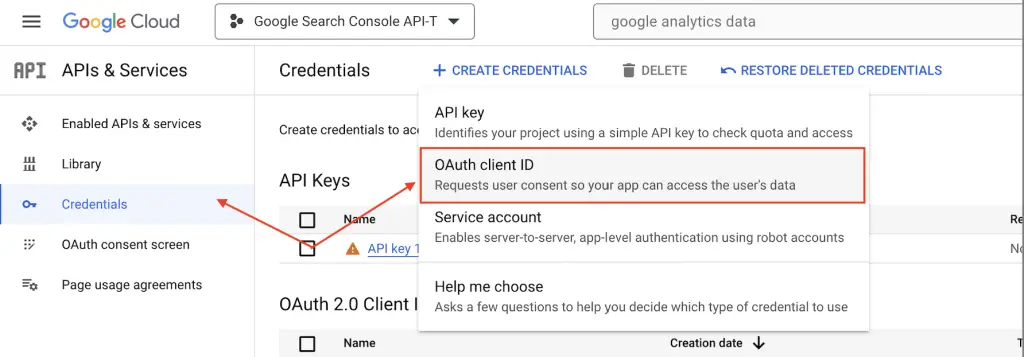

Create Your Credentials

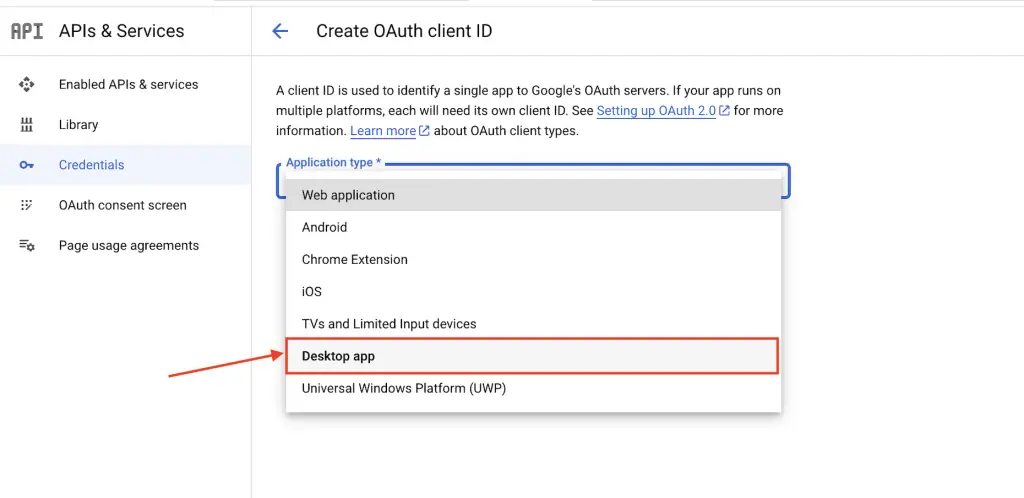

To create the credentials, go to the Credentials page, click Create credentials and select OAuth client ID.

Then, select Desktop app as the application type.

Download Your Credentials

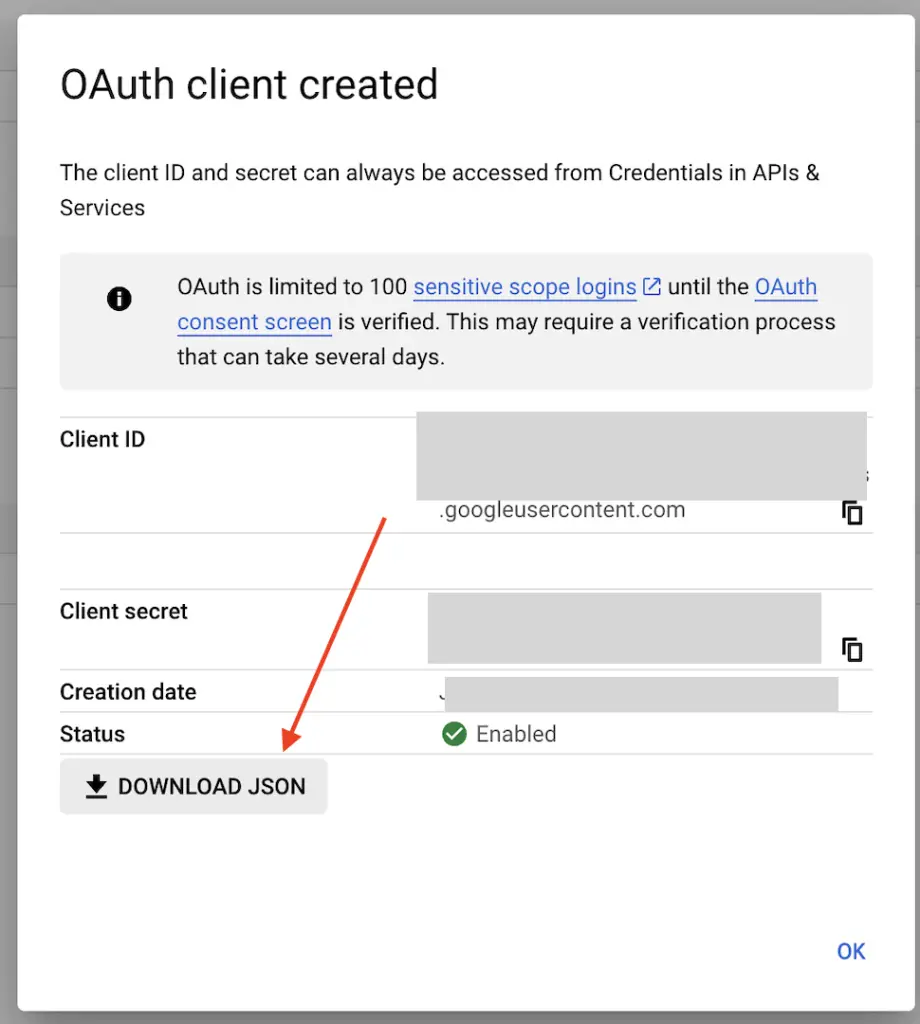

To download your credentials, click on Download JSON.

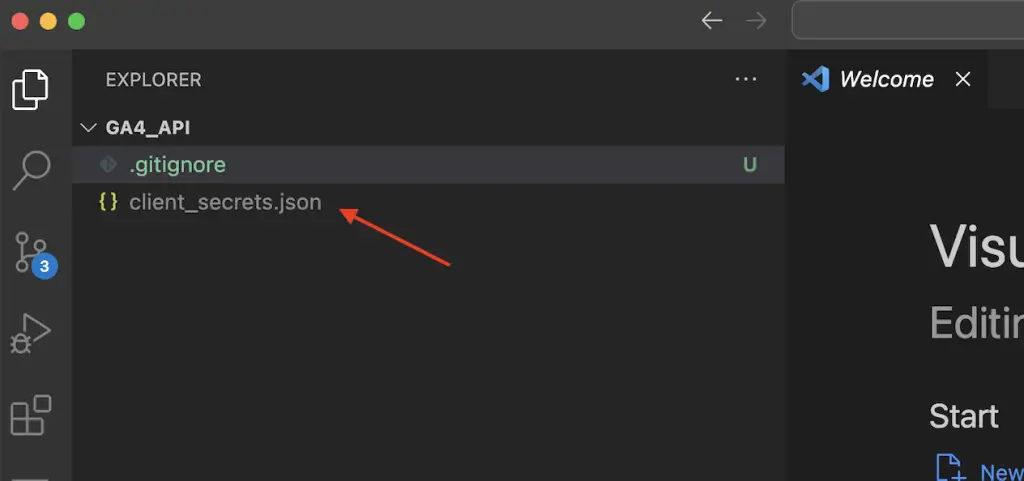

Store the output in a client_secrets.json file inside your project.
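Before moving on, it can help to confirm that the stored file really is a Desktop app client. The sketch below assumes that the JSON downloaded for a Desktop app nests its fields under a top-level "installed" key (web clients use "web" instead). The helper name `check_client_secrets` is hypothetical and is not part of any Google library.

```python
import json


def check_client_secrets(path="client_secrets.json"):
    """Return the client type found in an OAuth client secrets file.

    Hypothetical helper: raises ValueError if the file does not look
    like a Desktop app ("installed") client.
    """
    with open(path, encoding="utf-8") as f:
        data = json.load(f)

    # The first top-level key names the client type.
    client_type = next(iter(data), None)
    if client_type != "installed":
        raise ValueError(
            f"Expected a Desktop app client ('installed'), found {client_type!r}"
        )

    # Assumption: these two fields are required by the OAuth flow.
    missing = {"client_id", "client_secret"} - set(data["installed"])
    if missing:
        raise ValueError(f"Missing fields: {sorted(missing)}")
    return client_type
```

Calling `check_client_secrets()` from the project folder should return `"installed"` if the right file type was downloaded.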

3. Install Required Python Libraries

To use the new GA4 Analytics Data API in Python you will need to install the following libraries. To do so, open your Terminal and type:

$ pip3 install --upgrade google-auth-oauthlib

$ pip3 install --upgrade google-analytics-data

4. Connect to the Google Analytics Data API with Python

To connect to the Google Analytics Data API with Python, use the get_credentials() function from the following Python code.

```python
# connect_to_ga4_api.py
import os

from google.auth.transport.requests import Request
from google.oauth2.credentials import Credentials
from google_auth_oauthlib import flow

client_secrets = 'client_secrets.json'


def get_credentials(client_secrets=client_secrets):
    """Creates an OAuth2 credentials instance."""
    creds = None
    scopes = ["https://www.googleapis.com/auth/analytics.readonly"]

    # Search for valid credentials
    if os.path.exists('token.json'):
        creds = Credentials.from_authorized_user_file('token.json', scopes)

    # If there are no (valid) credentials available, let the user log in.
    if not creds or not creds.valid:
        if creds and creds.expired and creds.refresh_token:
            creds.refresh(Request())
        else:
            appflow = flow.InstalledAppFlow.from_client_secrets_file(
                client_secrets,
                scopes=scopes,
            )
            launch_browser = True
            if launch_browser:
                creds = appflow.run_local_server()
            else:
                # Console flow; run_console() may not be available in
                # recent google-auth-oauthlib releases.
                creds = appflow.run_console()

        # Save the credentials for the next run
        with open('token.json', 'w') as token:
            token.write(creds.to_json())

    return creds


credentials = get_credentials()
```

The code above opens a browser and starts the authentication flow so that you can grant access.

Once authenticated, it stores the authorized credentials in a token.json file so that they can be reused next time without authenticating with the Analytics API again.
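To see whether the saved access token is still current without calling the API, the token file can be inspected directly. This is a minimal sketch that assumes token.json contains an "expiry" field holding an ISO 8601 UTC timestamp, which is how the file written by `creds.to_json()` usually looks. The helper name `token_is_expired` is hypothetical.

```python
import json
from datetime import datetime, timezone


def token_is_expired(path="token.json", now=None):
    """Report whether the saved access token's expiry time has passed.

    Assumes an "expiry" field with an ISO 8601 UTC timestamp.
    A missing field is treated as expired.
    """
    with open(path, encoding="utf-8") as f:
        data = json.load(f)

    expiry = data.get("expiry")
    if not expiry:
        return True

    # Replace a trailing "Z" so fromisoformat() also works on older Pythons.
    expires_at = datetime.fromisoformat(expiry.replace("Z", "+00:00"))
    if expires_at.tzinfo is None:
        expires_at = expires_at.replace(tzinfo=timezone.utc)

    now = now or datetime.now(timezone.utc)
    return now >= expires_at
```

An expired access token is not a problem in itself: as long as a refresh token was saved, `get_credentials()` refreshes it on the next run.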

5. Make a Request to the GA4 API with Python

To make your first request to the GA4 API, you will need to find your property ID inside Google Analytics, pass the credentials to the BetaAnalyticsDataClient object, create a RunReportRequest instance and call the run_report() method.

To understand the dimensions, metrics and filter names that you can use, check out the dimensions and metrics explorer. For values, check out the official documentation (data API v1beta).
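A common stumbling block is passing the wrong identifier as the property ID. The sketch below assumes that GA4 property IDs consist of digits only and that measurement IDs (the ones starting with "G-") are not accepted in the `properties/...` resource name. The helper name `property_resource_name` is hypothetical.

```python
def property_resource_name(property_id):
    """Build the "properties/<id>" string used by the report request.

    Hypothetical helper; assumes GA4 property IDs are digits only.
    """
    value = str(property_id).strip()

    # Accept an already-prefixed value as well.
    if value.startswith("properties/"):
        value = value[len("properties/"):]

    if not (value.isascii() and value.isdigit()):
        raise ValueError(
            f"{property_id!r} does not look like a GA4 property ID; "
            "measurement IDs such as 'G-...' will not work here"
        )
    return f"properties/{value}"
```

For example, `property_resource_name(123456)` gives `"properties/123456"`, which is the format expected by the `property` field of the request.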

```python
from google.analytics.data import BetaAnalyticsDataClient
from google.analytics.data_v1beta.types import (
    DateRange,
    Dimension,
    Metric,
    RunReportRequest,
)

property_id = "XXXXXXX"  # your GA4 property ID

client = BetaAnalyticsDataClient(credentials=credentials)

request = RunReportRequest(
    property=f"properties/{property_id}",
    dimensions=[
        Dimension(name="date"),
        Dimension(name="landingPage"),  # API names use lowerCamelCase
    ],
    metrics=[Metric(name="sessions")],
    date_ranges=[DateRange(start_date="2024-01-01", end_date="today")],
)

response = client.run_report(request)

print("Report result:")
for row in response.rows:
    print(row.dimension_values[0].value, row.metric_values[0].value)
```

The above should return the report from the Google Analytics Data API.
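Large reports come back in pages rather than all at once. The sketch below shows one way to loop over the pages, assuming the request accepts `limit` and `offset` fields and the response exposes `rows` and `row_count`, as described in the Data API v1beta reference. `fetch_page` is a hypothetical callable that would wrap `client.run_report()`, so the loop logic can be tried without a network connection.

```python
def iter_report_rows(fetch_page, page_size=10000):
    """Yield every row of a report by requesting successive pages.

    fetch_page(offset, limit) is a hypothetical callable returning an
    object with .rows (a sequence) and .row_count (total matching rows).
    """
    offset = 0
    while True:
        response = fetch_page(offset, page_size)
        rows = list(response.rows)
        yield from rows
        offset += len(rows)
        # Stop when a page is empty or everything has been read.
        if not rows or offset >= response.row_count:
            break
```

With the real client, `fetch_page` could build a `RunReportRequest` with the same property, dimensions, metrics and date ranges as above, adding `offset=offset` and `limit=limit`, and return `client.run_report(request)`.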

How to Use GA4 Data with a Pandas DataFrame

```python
import pandas as pd


def response_to_df(response):
    columns = []
    rows = []

    for col in response.dimension_headers:
        columns.append(col.name)
    for col in response.metric_headers:
        columns.append(col.name)

    for row_data in response.rows:
        row = []
        for val in row_data.dimension_values:
            row.append(val.value)
        for val in row_data.metric_values:
            row.append(val.value)
        rows.append(row)

    return pd.DataFrame(rows, columns=columns)


df = response_to_df(response)
```
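Values in the report arrive as strings, and the `date` dimension is formatted as `YYYYMMDD`, so some conversion is usually needed before analysis. The sketch below shows one way to do that with typed records. It uses stand-in objects built with `SimpleNamespace` that only mimic the shape of a `run_report()` response, and it assumes every metric is numeric. The helper name `response_to_records` is hypothetical.

```python
from datetime import datetime
from types import SimpleNamespace


def response_to_records(response):
    """Convert a report response into a list of dicts with typed values.

    Hypothetical helper: parses the "date" dimension (YYYYMMDD) into a
    date object and casts every metric to float.
    """
    dim_names = [h.name for h in response.dimension_headers]
    metric_names = [h.name for h in response.metric_headers]

    records = []
    for row in response.rows:
        record = {}
        for name, cell in zip(dim_names, row.dimension_values):
            if name == "date":
                record[name] = datetime.strptime(cell.value, "%Y%m%d").date()
            else:
                record[name] = cell.value
        for name, cell in zip(metric_names, row.metric_values):
            record[name] = float(cell.value)
        records.append(record)
    return records


# Stand-in object that mimics the shape of a run_report() response.
fake_response = SimpleNamespace(
    dimension_headers=[SimpleNamespace(name="date"), SimpleNamespace(name="landingPage")],
    metric_headers=[SimpleNamespace(name="sessions")],
    rows=[
        SimpleNamespace(
            dimension_values=[SimpleNamespace(value="20240101"), SimpleNamespace(value="/")],
            metric_values=[SimpleNamespace(value="42")],
        )
    ],
)

records = response_to_records(fake_response)
```

The resulting list of dictionaries can be passed straight to `pd.DataFrame(records)` if a DataFrame with typed columns is preferred.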

If you want more Google Analytics Data API Python samples to play around with, check out the official Google Analytics GitHub repository.

Now that we have learned how to extract data from the Analytics Data API with Python, you may want to explore additional Python SEO tutorials, move on to extracting data from the Google Search Console API or the Reddit API, or play around with other data APIs such as the Wikipedia API. Also check out Joseba Ruiz's great post on forecasting with the GA4 API.

SEO Strategist at Tripadvisor, ex-Seek (Melbourne, Australia). Specialized in technical SEO. Writer in Python, Information Retrieval, SEO and machine learning. Guest author at SearchEngineJournal, SearchEngineLand and OnCrawl.