How to identify and fix technical SEO issues to improve your organic traffic.

Forget technical SEO templates. When it comes to technical audits, there is no one-size-fits-all solution. To make good tech SEO recommendations, you need to tailor your audit to the realities of your client.

This post will give you a good checklist of things you should look out for when auditing a website.

Who is This Article For?

This article is designed for beginner to intermediate SEO consultants, or any advanced marketing person looking to broaden their understanding of technical SEO.

Technical SEO Audit Checklist

- Check for Https issues

- Change Internal Links to Absolute URLs

- Internal 404 pages

- Custom 404 page

- Fix server errors (5XX)

- Block Internal Search Results

- Check for Canonical tag inconsistencies

- Canonicalize mobile version

- Implement Hreflang properly

- Check if Paginated Series Are Properly Implemented

- Add structured data

- Check for PageSpeed

- Remove non-relevant non-www

- Fix 302s

- Fix Redirect Chains and Redirect Loops

- Broken Link reclamation (links pointing to old urls)

- Split XML Sitemaps

Content SEO Audit Checklist

- Add basic meta (Title, meta description, H1, permalinks)

- Fix Duplicate Content

- Fix Duplicate Meta Tags

- Remove meta keywords tags

- Resolve Thin Content (add at least 300 words to all pages)

- Image optimization (alt tags, image names)

- Prioritize Actions With SEMRush’s Competition Map

Check for HTTPs Issues

To help you audit your website for HTTP to HTTPs migration issues, there are plenty of tools that you can use to find mixed content issues, pages that are still using HTTP, and duplicate content.

Find Indexed HTTP Pages

One quick hack to find pages that are still indexed in HTTP is to use advanced search operators in Google and perform a site search.

site:example.com -inurl:https

If you find many pages in Google using this advanced query, that is a sign that you probably have links to the non-https version of the page or that the page hasn’t been crawled since you migrated from HTTP to HTTPs.

To find out if the issue is the latter, just copy the URL and add cache: before it.

cache:example.com

You’ll get a message from Google telling you when the page was last seen.

If the date shown is older than your migration, you only have to wait for Google to come by and crawl that page again.

Quick Fix: To fix the issue, redirect HTTP to HTTPs in your server configuration. On Apache, check your .htaccess file.
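To verify that the redirect behaves as intended, you can compare what the server returns for an HTTP URL with what you expect. The Python sketch below is only an illustration: `is_https_redirect` is a name made up for this post, and it assumes you have already collected the status code and the `Location` header with your crawler of choice.

```python
from urllib.parse import urlsplit


def is_https_redirect(url, status, location):
    """Return True if an http:// URL answers with a 301 to its https:// twin."""
    source = urlsplit(url)
    target = urlsplit(location or "")
    return (
        source.scheme == "http"
        and status == 301
        and target.scheme == "https"
        and target.netloc == source.netloc
        # Treat an empty path and "/" as the same page.
        and (target.path or "/") == (source.path or "/")
    )
```

Any HTTP URL for which this returns False is worth a closer look: it may answer with a 200, use a temporary 302, or redirect to a different page.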

Find Out If You Are Using The Right TLS Version

TLS stands for Transport Layer Security. Like its predecessor, Secure Sockets Layer (SSL), TLS is a cryptographic protocol that provides authentication and data encryption between a browser and a server. If your website has an SSL or TLS certificate, the URL will start with “https://” instead of “http://”, which means the communication is encrypted.

But wait,

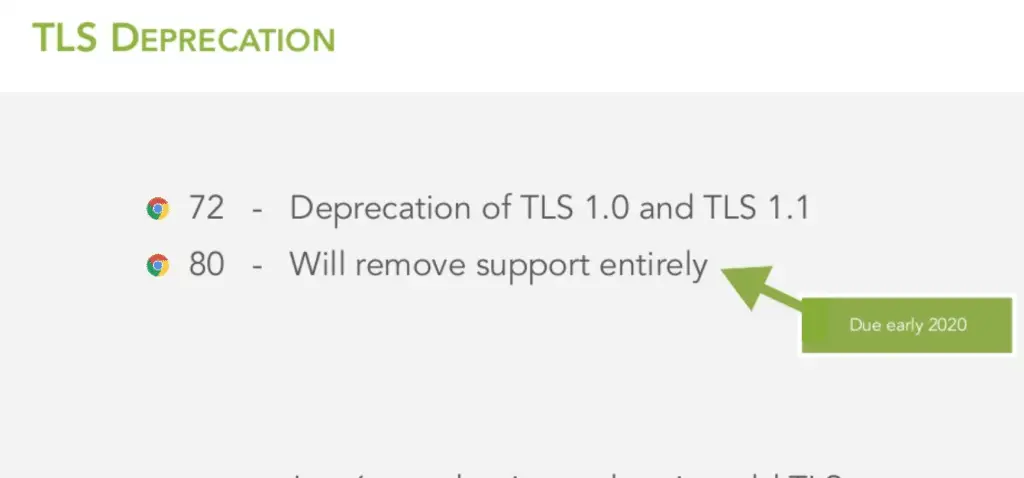

Chrome has been phasing out the older versions of TLS:

- Chrome 72 has deprecated TLS 1.0 and TLS 1.1

- Chrome 80 will remove support entirely

According to Tom Anthony, soon websites that use only old TLS will be inaccessible to Googlebot.

To make sure that your website stays crawlable, you need to check that your servers support the latest versions of TLS.

Quick Fix: Head over to cdn77 and test whether your server supports the latest TLS and SSL versions. TLS 1.2 and 1.3 must be enabled.
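If you prefer to check this from your own machine, Python’s standard `ssl` module can do a similar test. This is a minimal sketch, not a replacement for a full scanner: the function names are hypothetical, and it only tells you which protocol your client and the server agreed on.

```python
import socket
import ssl


def modern_tls_context():
    """Build a client context that refuses anything older than TLS 1.2."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host, port=443, timeout=5.0):
    """Connect to host and return the negotiated protocol, e.g. 'TLSv1.3'.

    Raises ssl.SSLError if the handshake fails, for instance when the
    server only offers TLS 1.0 or 1.1.
    """
    context = modern_tls_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()
```

Calling `negotiated_tls_version("www.example.com")` needs network access. A handshake error is a hint that the server should be reconfigured, although certificate problems raise the same family of errors.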

Change Internal Links to Absolute URLs

When making a proper technical SEO audit, you should look at your internal links and see if they are relative or absolute.

Relative URLs: /my-url

Absolute URLs: https://www.example.com/my-url

Some people prefer to use relative URLs for internal links since they are easier to manage during a site migration. However, if you are not careful, you can end up with both versions of the same URL indexed.

Quick Fix: You can mitigate the risk of Google picking up a mix of HTTP and HTTPs pages by using absolute URLs. Make sure you use them everywhere.

If you look in the code at all the internal links that are pointing to other pages within the website, you will discover what kind of URLs you are using.
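Looking through the code by hand gets tedious on anything but a tiny site. As a rough sketch, the standard-library parser below lists every relative link on a page together with the absolute URL it resolves to. The class and function names are made up for this example, and anything without a scheme (including `#anchor` and `//host/path` links) is treated as relative.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def relative_links(html, page_url):
    """Map each relative href found in html to its absolute form."""
    collector = LinkCollector()
    collector.feed(html)
    return {
        href: urljoin(page_url, href)
        for href in collector.hrefs
        if not urlsplit(href).scheme  # no scheme means the link is relative
    }
```

The resulting mapping can be handed to your developers as a list of links to rewrite.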

404s

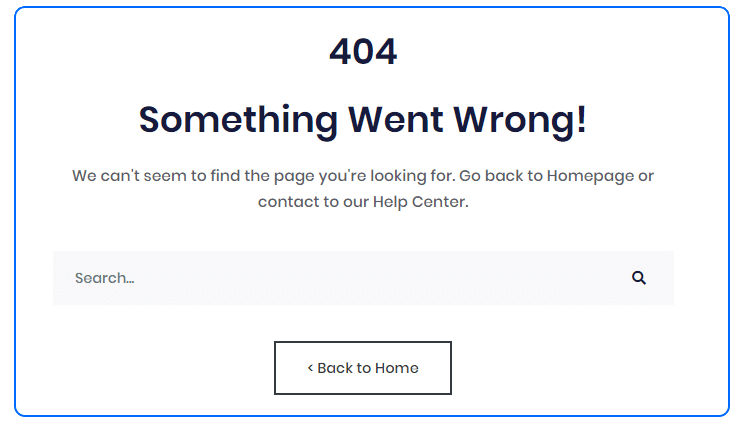

The 404 page is the page a user sees when they try to reach a page that doesn’t exist on your website.

Fix Internal 404 Errors

To find out which internal links are broken you can either use SEMRush’s Site Audit Tool or Screaming Frog’s Broken Link Checker. What you want to do is to make sure that no internal links send an internal 404 error.

Create a Custom 404 Page

If you want, you could keep the default server-generated 404 page. However, it will be neither attractive nor branded. A custom page can be better and can keep people on your website longer.

A great custom 404 page will help people find the information they’re looking for and will give you the opportunity to show relevant content to your users whenever they reach a broken link or a deleted page.

Fix server errors (5XX)

Go to Google Search Console’s index coverage report and make sure that all server errors (5XX) are fixed.

This is a serious issue. It badly impacts the user experience, and it is also a strong signal to Googlebot that your servers don’t have the capacity to fulfill the crawl request.

When Google gets 5xx errors on a site, it can lower a page’s ranking or drop it from the index.

Make sure that this was a one-time issue. If it was not, you might have corrupt or missing files, or you might need extra server capacity.

Plugins can also be the source of corruption on your website.

Noindex Internal Search Results

Leaving search results indexable can create multiple issues such as thin content, duplicate content and wasted crawl budget, and it can even open the door to a very harmful spamming technique.

How to Fix Crawl Budget and Duplicates in Internal Search?

Search Results tend to create duplicate content, especially the sorting and filtering options.

The internal search usually uses parameters or subfolders to deliver the content.

For example, if I search for shoes, I will end up on a URL like one of these:

example.com/search/shoes

example.com/s?=shoes

But here is the deal.

If I search for shoes and sort by color, I will end up with the same content, distributed in a different way.

example.com/search/shoes?sort=color

example.com/s?=shoes&sort=color

If you have many sorting and filtering options, with many variations in each, you could end up with billions of possible URL variations.
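To get a feel for how quickly this adds up, here is a small back-of-the-envelope calculation in Python. It assumes every filter is optional and independent of the others, and it ignores parameter order, so treat the result as an order of magnitude rather than an exact count. The function name is made up for the example.

```python
from math import prod


def count_url_variations(options_per_facet):
    """Count the URLs one search can produce when every facet is optional.

    Each facet contributes its number of values plus one for "not set".
    """
    return prod(n + 1 for n in options_per_facet)
```

With ten filters of nine values each, `count_url_variations([9] * 10)` gives ten billion possible URLs for a single search term.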

To help you with navigation and faceted SEO on a large site, you can watch the great post by Serge Stefoglo on Moz.

How to Make Sure No One Spams Your Search Results?

Let’s see why leaving your /search/ subfolder unblocked and indexable makes it really easy to launch harmful negative SEO attacks on your site.

How would someone SPAM your website?

Let’s see some steps a competitor or a malicious user could take to hurt your SEO and potentially harm your brand.

1. Create a spam website

2. Search for multiple harmful terms on your website, like:

- example.com/search/porn

- example.com/search/https://www.competitorwebsite.com

- example.com/search/worst-shoes-in-the-world

3. Repeat the operation 5 million times

4. Send a massive amount of backlinks to those pages

You would end up with a lot of those pages indexed in Google, creating a massive amount of harmful duplicate content.

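You definitely want to noindex the internal search results. To do this, add a <meta name="robots" content="noindex"> tag to the head of every page in your search section. Keep in mind that Google can only see the tag on pages it is allowed to crawl.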
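Once the change is deployed, it is worth spot-checking a few search URLs to confirm they really carry the directive. The sketch below uses only the Python standard library and assumes you already have the page’s HTML as a string. The names are hypothetical, and it does not look at the `X-Robots-Tag` HTTP header, which can carry the same directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            self.directives.update(d.strip().lower() for d in content.split(","))


def is_noindexed(html):
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives
```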

Be Consistent With Canonical Tags

A canonical tag, or rel=canonical tag, is a way of telling search engines which URL is your preferred version of a page. If you want to learn more about canonicals, see the Moz guide on the subject.

Rule of thumb with canonicals:

- You want to be as clear as possible with that tag and never leave one empty.

- If you don’t know which page is your canonical, use a self-referencing canonical.

- If two pages serve the same content, choose only one to be the canonical

- Never mix a canonical tag with a noindex tag. It sends mixed signals to Google.
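These rules of thumb are easy to turn into an automated check. The following is a sketch under a few assumptions: you have the HTML of each page as a string, the issue labels are my own wording, and a missing canonical is reported alongside an empty one even though the list above only mentions the empty case.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Record canonical hrefs and whether a robots noindex is present."""

    def __init__(self):
        super().__init__()
        self.canonicals = []
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonicals.append((attributes.get("href") or "").strip())
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = (attributes.get("content") or "").lower()
            self.noindex = self.noindex or "noindex" in content


def canonical_issues(html):
    """Return a list of canonical problems found in one page."""
    signals = HeadSignals()
    signals.feed(html)
    issues = []
    if not signals.canonicals:
        issues.append("missing canonical")
    elif len(signals.canonicals) > 1:
        issues.append("multiple canonicals")
    if any(href == "" for href in signals.canonicals):
        issues.append("empty canonical")
    if signals.noindex and signals.canonicals:
        issues.append("noindex combined with canonical")
    return issues
```

An empty list means the page passed these checks. It does not tell you whether the canonical points at the right URL, which still needs a human eye.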

Canonicalize Mobile or Desktop Version

Here you can go either way, depending on your preferred version. Whichever you choose, tell Google to index only one of the two versions.

Also, you should make bidirectional annotations using alternate and canonical tags.

The desktop URL should contain a rel=alternate tag pointing to the mobile URL, and the mobile URL should contain a rel=canonical pointing to the desktop URL.

Example:

On Desktop

<link rel="alternate" media="only screen and (max-width: 640px)" href="https://m.example.com">

On Mobile

<link rel="canonical" href="https://www.example.com">

Implement Hreflang Properly

Hreflang tags help you tell Google which languages and regions specific pages are aimed at. Make sure that these are properly set up if you have a multilingual website.

Here is a quick overview of how it should be implemented.

<link rel="alternate" hreflang="fr-ca" href="http://www.example.com/fr/" />

<link rel="alternate" hreflang="en-ca" href="http://www.example.com/en/" />

Be careful: hreflang annotations should cross-reference each other. You can see pages that don’t in the International Targeting tab of Google Search Console.

Basically, if Page A links to Page B, Page B should link back to Page A.
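If you have a crawl export of your hreflang annotations, the return-link rule can be checked in a few lines of Python. This is a sketch only: the input format (a dictionary mapping each page to the set of alternates it declares) and the function name are assumptions made for this example.

```python
def missing_return_links(hreflang_map):
    """Find hreflang annotations that are not reciprocated.

    hreflang_map maps each page URL to the set of alternate URLs it declares.
    Returns sorted (page, alternate) pairs where alternate does not link back.
    """
    return sorted(
        (page, alternate)
        for page, alternates in hreflang_map.items()
        for alternate in alternates
        if alternate != page and page not in hreflang_map.get(alternate, set())
    )
```

Every pair in the result is a page whose alternate needs an annotation pointing back at it.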

But Wait… There is More…

You may also want to add these hreflang annotations to your sitemap.xml to make sure that you are crystal clear with Googlebot.

Here is an awesome hreflang generator built by Aleyda Solis to help you with this task.
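If you would rather script it, the sitemap format for hreflang is simple enough to generate with Python’s built-in XML module. The sketch below is an illustration under assumptions: the function name is made up, and it builds one `<url>` entry per language version, each listing all versions (itself included) as `xhtml:link` elements.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def hreflang_sitemap(alternates):
    """Build a sitemap where every URL lists all language versions.

    alternates maps an hreflang code (e.g. "fr-ca") to a URL.
    """
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("xhtml", XHTML_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url in alternates.values():
        entry = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
        # Each entry repeats the full set of alternates, itself included.
        for code, href in alternates.items():
            ET.SubElement(
                entry,
                f"{{{XHTML_NS}}}link",
                {"rel": "alternate", "hreflang": code, "href": href},
            )
    return ET.tostring(urlset, encoding="unicode")
```

Calling it with `{"fr-ca": "http://www.example.com/fr/", "en-ca": "http://www.example.com/en/"}` returns the XML as a string that you can write to your sitemap file.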

Rel=Next is Dead, Check if Paginated Series Are Properly Implemented

John Mueller’s announcement that Google no longer uses rel=next and rel=prev caused a commotion in the SEO world.

To make sure that your pagination is properly implemented, read the great case study by Adam Gent at DeepCrawl. With this post, you’ll have everything you need to manage your pagination SEO.

Also, make sure that you have a look at John Mueller’s official solution to properly manage pagination.

Add Structured Data

Structured data is great. It helps Google understand the type of data and the type of products or pages that you have on your website. A good example of this is Job listings in Google. These are gathered using structured data on websites that are listing Jobs.

In order to see if your website is already set-up for schema tags, you can use the Google Structured Data Testing Tool. It is free and you just need to add in a URL to see if your Structured Data is properly set-up.

Make sure that you have at least the basic Organization and Breadcrumb schema data. You could go further by adding other Structured Data such as JobPosting, Reviews, Product or VideoObject.

Some structured data like JobPosting will help trigger Rich Snippets when someone searches for a job in Google, taking a lot of real estate in the SERPs. Others, like Breadcrumbs, will help Google understand the website structure and the internal linking of the site. Lastly, the Organization schema markup will help you trigger the knowledge panel on the right-hand side of Google search listings.
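To give an idea of what the basic Organization markup looks like, here is a minimal Python sketch that builds it as JSON-LD. The function name and the three fields chosen (name, url, logo) are my own selection for illustration, and the values are assumed to be trusted strings that you control.

```python
import json

SCRIPT_OPEN = '<script type="application/ld+json">'
SCRIPT_CLOSE = "</script>"


def organization_jsonld(name, url, logo_url):
    """Return a JSON-LD <script> tag describing an Organization."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo_url,
    }
    return SCRIPT_OPEN + json.dumps(data, indent=2) + SCRIPT_CLOSE
```

Paste the returned tag into the head of your homepage, then run the URL through a structured data testing tool to confirm it is read correctly.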

Improve PageSpeed

Page speed is one of the most important aspects of SEO at the moment. You probably know this, but Google is now putting more weight on page speed when ranking websites, so it’s very important that your website loads fast. GTmetrix is an awesome tool for evaluating your website, but you could also use Google’s own Lighthouse for your site audit.

As for WordPress websites, there are many plugins that can help you make your website faster, such as W3 Total Cache, BJ Lazy Load and Autoptimize.

There are a lot of quick wins here, like optimizing and compressing images, deferring the parsing of JavaScript, and minifying JavaScript and CSS files. You can also enable browser caching and make sure that you upload images at the right size and in the right format.

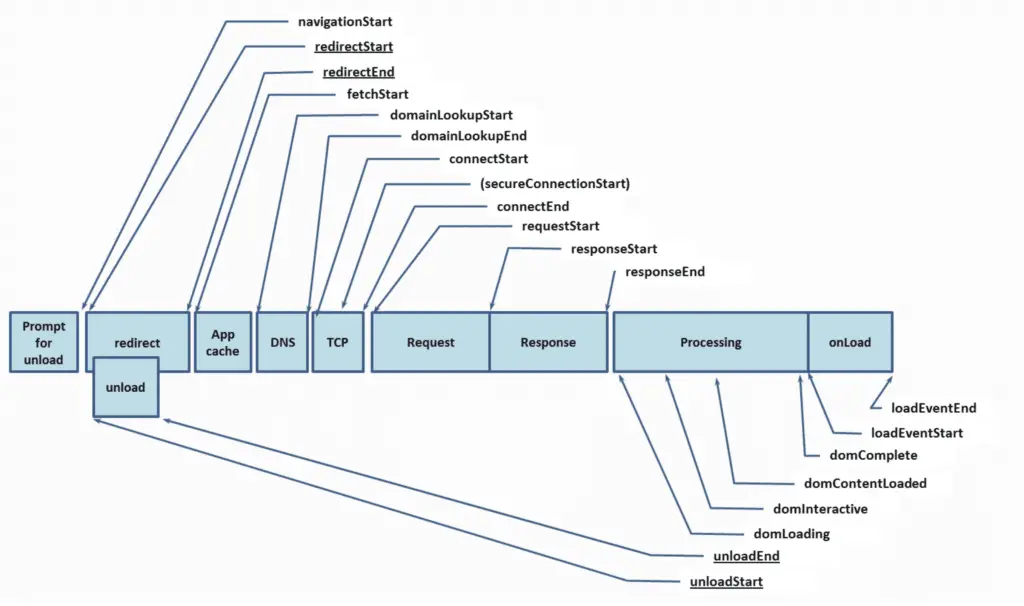

Make sure that you understand Navigation Timing and User Timing.

Remove non-relevant non-www

Over time, especially when you own a large website, you might have subdomains that you forgot about that are still indexed by Google.

To see if this is the case, run a site search on Google for your domain and exclude www.

site:yoursite.com -www

This way you will find out if you have subdomains that you used in the past and that you’ve forgotten to remove from Google Index.

oldcontent.example.com

Having these pages indexed just fills Google’s index with useless pages. I would highly recommend that you remove that entire subdomain from the index. The quick way to do this is to verify the subdomain in Google Search Console and then use the URL removal tool to manually remove the entire website. If you still have access to the code, add the noindex meta robots directive to the head of each page. The URLs should be removed very quickly.

Fix 302s

301 redirects are permanent and 302 redirects are temporary. The best practice is to always use 301 redirects when permanently redirecting a page.

Check For Redirect Chains or Redirect Loops

If you work on a very large site, this is going to be a very important step of your audit. Smaller websites will be less impacted by redirect chains.

Here is what you want to look for:

Page A redirects to Page B, which redirects to Page C, which canonicalizes back to Page A.

Page A > 301 > Page B > 302 > Page C > Canonical > Page A

It might seem like a small problem on a small scale, but when you have a million web pages that implement this improperly, you end up with millions of redirections that can 1. put a load on your server and 2. convince Googlebot not to follow your redirects.

Quick Fix: Remove the redirect chain by redirecting the first page directly to the last page of the chain.
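If you can export your redirects as a list of source and target URLs, finding chains and loops is a small scripting job. The Python sketch below assumes the redirects are given as a dictionary of `{source: target}`. The function names are made up, and canonical tags are not taken into account.

```python
def trace_redirects(start, redirects):
    """Follow start through a {source: target} map.

    Returns (path, is_loop), where path lists every URL visited in order.
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, True  # we came back to a URL already visited
        seen.add(current)
    return path, False


def flatten_chains(redirects):
    """Point every source straight at the final URL of its chain.

    Sources caught in a loop are left out, since they have no final URL.
    """
    flattened = {}
    for source in redirects:
        path, is_loop = trace_redirects(source, redirects)
        if not is_loop:
            flattened[source] = path[-1]
    return flattened
```

Any path longer than two URLs is a chain, and the flattened map is the list of redirects you would hand to your developers.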

Take a look at Screaming Frog’s guide to help you identify your redirect chains.

SEO Fundamentals (Title Tags, Meta Descriptions, H1s…)

If you are not sure what I am talking about here, you should read Moz’s On-Site SEO guide. This will help you understand the basics that your website should cover in terms of SEO. I will not cover those basics here. I just want to bring up two little things about titles, meta descriptions, and other basics.

Fix Duplicate Content

It is very easy to have duplicate content, especially when you have a large website. Builtvisible has a great guide to identify and fix duplicate content issues.

Here are a few other tips.

Fix Duplicate Meta Tags

Find duplicates by crawling your website with Screaming Frog. It is very easy to remove these duplicates by having dynamic meta tags.

Here is an example of unique dynamically generated meta tags.

Best {Product Name} {Product Category} in {City} ({# of colors} colors available) – {YourWebsite}

Shop {# of products} {product category} on {your website} and get the best prices in {city}. {temporary promotion} off available until {date}. Redeem your coupon quick. Posted on {date}.

Example of Dynamic Meta Tags

This will give you something like this.

Best Nike Shoes in Toronto, ON (29 colors available) – Amazon.com

Shop 126 Nike Shoes on Amazon.com and get the best prices in Toronto. 30% off available until May 30th. Redeem your coupon quick. Posted on May 15th.

Example of Dynamic Meta Tags

As you can see, you now have 100% unique meta tags and can forget about duplicates.
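In code, this kind of template is just string formatting. Here is a minimal Python sketch: the placeholder names are simplified versions of the ones in the template above, and the duplicate finder assumes you have a crawl export that maps each URL to its title.

```python
from collections import defaultdict

TITLE_TEMPLATE = "Best {product} in {city} ({colors} colors available) – {site}"


def render_title(product, city, colors, site):
    """Fill the title template with the data of one page."""
    return TITLE_TEMPLATE.format(product=product, city=city, colors=colors, site=site)


def duplicate_titles(titles_by_url):
    """Group URLs that share the same title and keep only the duplicates."""
    urls_by_title = defaultdict(list)
    for url, title in titles_by_url.items():
        urls_by_title[title].append(url)
    return {title: urls for title, urls in urls_by_title.items() if len(urls) > 1}
```

Running `duplicate_titles` on your next crawl is a quick way to confirm that the new templates really did remove the duplicates.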

Remove meta keywords tags

Meta Keywords Tags have been found to have no use whatsoever in terms of SEO and they give your competitors insights on the keywords you are targeting. You should remove them.

Resolve Thin Content

Many websites, especially e-commerce websites, have pages that could be considered thin content, or even duplicate content. When you are doing your audit, look out for those. You could use a tool like SiteLiner to see how many pages could be considered duplicate content, and Screaming Frog to see how many pages have fewer than 500 words.
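If you want a rough word count without a crawler, the Python standard library is enough. This sketch counts the words outside `<script>` and `<style>` blocks. It assumes you have the page’s HTML as a string, the names are made up, and it will also count navigation and footer text, so the number is an upper bound on the real content.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Count words that sit outside <script> and <style> blocks."""

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())


def word_count(html):
    """Return the number of whitespace-separated words in the page text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()  # flush any text still buffered by the parser
    return parser.words


def is_thin(html, minimum_words=500):
    """Flag pages that fall below the word threshold."""
    return word_count(html) < minimum_words
```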

Small tip: on e-commerce websites, whenever you have a category page that you want to rank, add a 150-word paragraph describing the page.

Don’t leave before you read about the next part.

Image optimization (alt tags, image names)

This definitely falls into the basic and simple technical SEO recommendations. The purpose of this post is not to debate whether alt tags help SEO.

This is simply a best practice and quick fix that you should look into when analyzing a website.

Quick Fix: Just extract a list of images without alt tags with your favorite tool and send it to your dev team.
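If your favorite tool happens to be a script, the extraction can look like the Python sketch below. It assumes you have the page’s HTML as a string, the names are hypothetical, and it reports images with an empty alt attribute as well, even though an empty alt can be intentional for purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> with a missing or empty alt."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            if not (attributes.get("alt") or "").strip():
                self.missing.append(attributes.get("src"))


def images_without_alt(html):
    """Return the image sources that need an alt text, in page order."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing
```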

Prioritize Actions With SEMRush’s Competition Map

This step is one of the most insightful analyses you can do when auditing a website for the first time.

What is your position compared to your competitors and what can you do about it?

I adopted this strategy after seeing Stephanie Briggs’ presentation at MozCon 2018.

I strongly recommend that you read that presentation and learn from it.

Brand Hijacking

Brand hijacking is one of the most successful SEO strategies you can use. Brands have a massive amount of search volume, and ranking on those brand terms could really get you a large amount of traffic.

What is Brand Hijacking?

Brand hijacking is essentially creating brand landing pages to try to rank for other brands’ keywords.

I am NOT talking about hacking a website to steal its rankings, but simply about ranking for brand keywords because you have brand-related content on your own website.

We often see this on e-commerce websites, social media sites and job boards, because the leaders in these industries usually have a massive amount of backlinks, which helps them outrank brands on their own branded keywords.

However, brand hijacking is hard, so each brand page should be 100% optimized. You should have textual content, a brand logo whose file is named after the brand, and a relevant alt tag. You should also link to the brand landing page from other parts of the website (e.g. the product page).

Tools Needed To Audit a Website

To make a good audit, there are free and paid tools you can use.

What do you absolutely need?

Google Analytics and Google Search Console. Without those, you will not be able to make a good tailored recommendation.

Sometimes, you might want to make an audit to prospect for new clients. In that case, you will need other tools to give you insights into their site’s health. Here’s a quick list of tools that can help you out:

- Google Analytics, Google Search Console, Bing Webmaster Tools (Analytics Data)

- Screaming Frog, SEO Powersuite, OnCrawl, DeepCrawl (Crawling)

- SEMRush, Ahrefs, Moz (Competitor and Traffic analysis)

- Lighthouse, GTMetrix (PageSpeed)

Summary

Woah! That was a big one.

Just a few other best practices you should follow to avoid your site losing traffic when implementing technical SEO recommendations:

- Crawl the website regularly for issues.

- Always launch in a dev environment before making your changes live.

- Make sure that you properly map your best-performing assets: high-traffic keywords and pages, pages with most backlinks, etc.

- Always have a quick roll-back plan before launching any big changes.

- Plan which KPIs you are going to track to evaluate the success of your changes.

- Make your changes in smaller subsets. If you launch everything at the same time, you will not be able to track what works and what doesn’t.

This is it! Good luck making your own technical SEO audit.

Useful Resources and Good Reads

- https://www.domwoodman.com/posts/how-to-make-your-awful-technical-seo-audit-better/

- https://builtvisible.com/duplicate-content-detection/

SEO Strategist at Tripadvisor, ex- Seek (Melbourne, Australia). Specialized in technical SEO. Writer in Python, Information Retrieval, SEO and machine learning. Guest author at SearchEngineJournal, SearchEngineLand and OnCrawl.