Welcome to this Google Search Console API tutorial with Python.

In this tutorial, I will show you how to extract all your Google Search Console data into CSV files, using the GSC API and Python.

If you want to get the code I used in this video, head over to GitHub.

Build Great SEO Reports

One great use case for this API in Python is building SEO reports like the one below. I’ll show you how in the next video tutorial. For now, let’s extract data.

Why use the Google Search Console API?

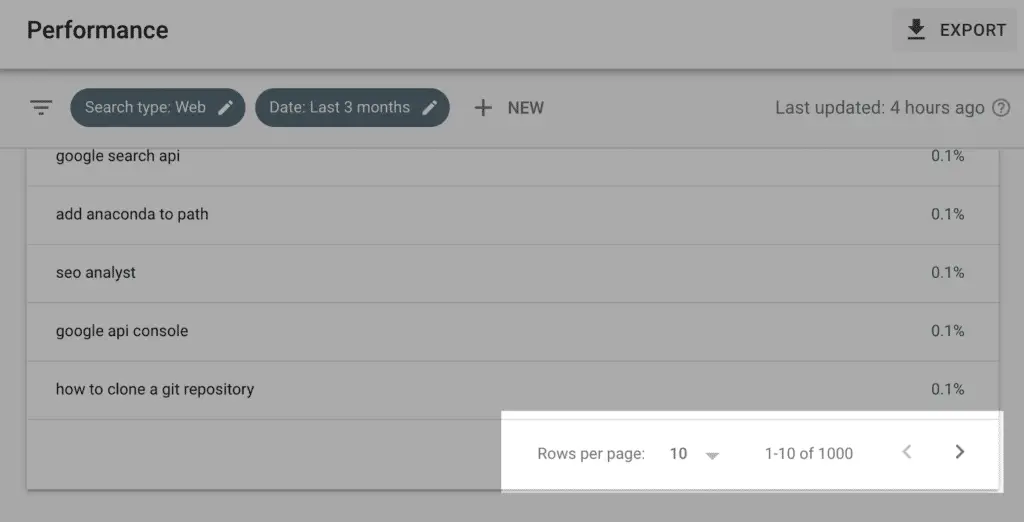

The reason I am using the Google Search Console API is that the GSC user interface is quite limited for advanced reporting.

It shows only 1,000 rows at a time, and you can’t extract keywords per page at scale.

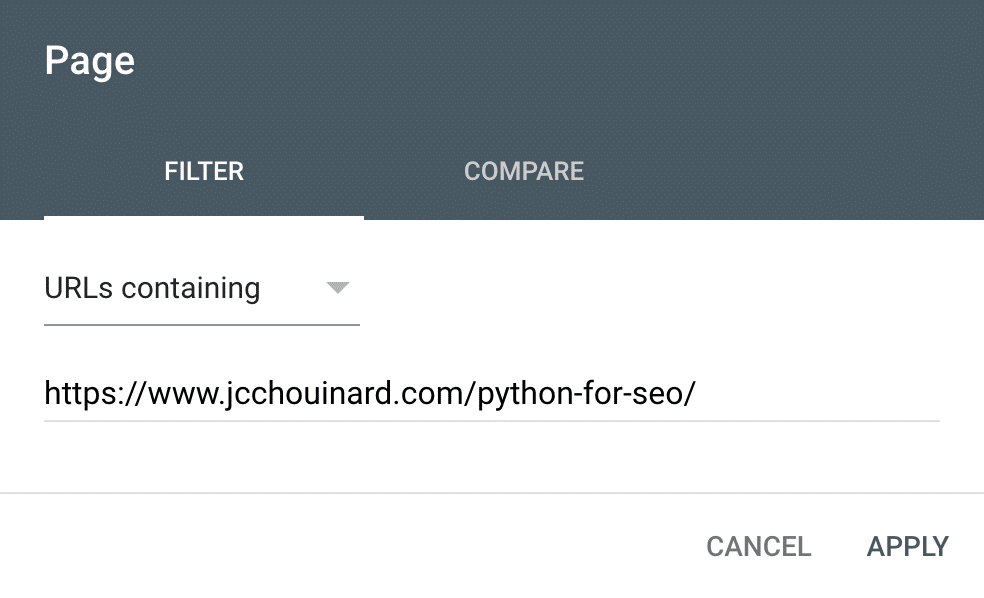

In Google Search Console, you can look at the page and check the keywords for that specific page. But, checking each page manually is a painful process and provides limited insight.

The Search Console API provides a way around that by letting you extract all of the data, which helps you build advanced SEO reports using Python.

Get Started With the GSC API

Before we get to the video, you need to do a few things to be able to access the API.

1. Clone the Repository

First, you need to clone the code from my GitHub account.

Clone the repository using git clone in your Terminal:

$ git clone https://github.com/jcchouinard/GoogleSearchConsole-Tutorial.git

Run this command from the directory where you want the repository to be cloned.

2. Install the Requirements

Move into the directory using cd in the terminal.

$ cd path/to/GoogleSearchConsole-Tutorial

Then, install the packages listed in requirements.txt.

$ pip install -r requirements.txt

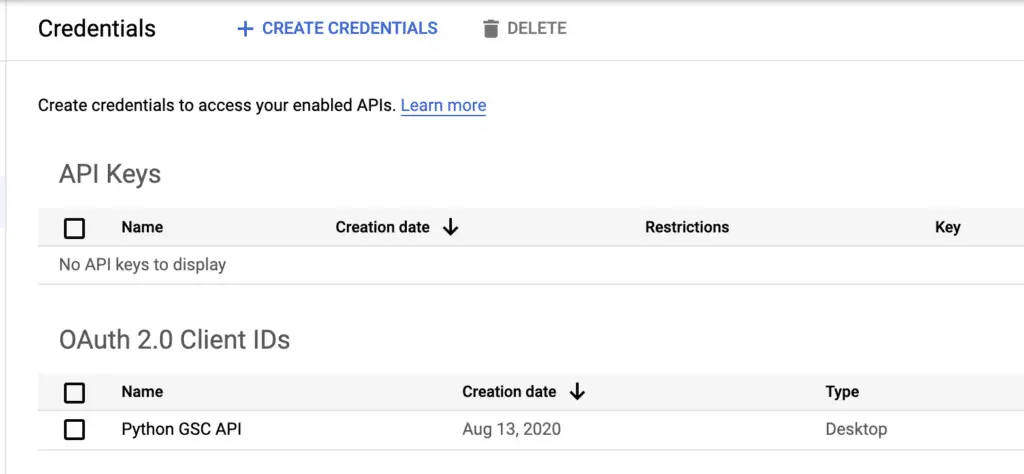

3. Get Your Client Secrets File

You need to get a client secrets key and store it in a JSON file. If you don’t know how, don’t worry: just check my tutorial on how to get the Google Search Console API keys.

Beginning of the Video Tutorial on GSC API

Define Basic Variables

You need to define the website and the dates that you want to extract and give the CSV an output name.

creds = 'client_secrets.json'

# https://www.jcchouinard.com/google-api/

site = 'https://www.jcchouinard.com'

start_date = '2020-07-15'

end_date = '2020-07-25' # Default: 3d in past

output = 'gsc_data.csv'

Let’s run this.

Authenticate Using OAuth

To use the API, we need to authenticate with GSC using OAuth. To do so, I import the oauth module that I created to authorize my credentials, and store the result in the webmasters_service variable.

The first time you run this, a pop-up will open asking you to log in to your Google account and authorize the credentials. It then saves those authorised credentials so you don’t have to log in each time.

from oauth import authorize_creds

webmasters_service = authorize_creds(creds)

Now, run the function.

Awesome, it works!

Extract GSC Data for a List of URLs

The first function we are going to look at extracts GSC data for a list of URLs. This is useful if you crawl your website, get a list of thousands of URLs, and want to know whether they are getting traffic or not.

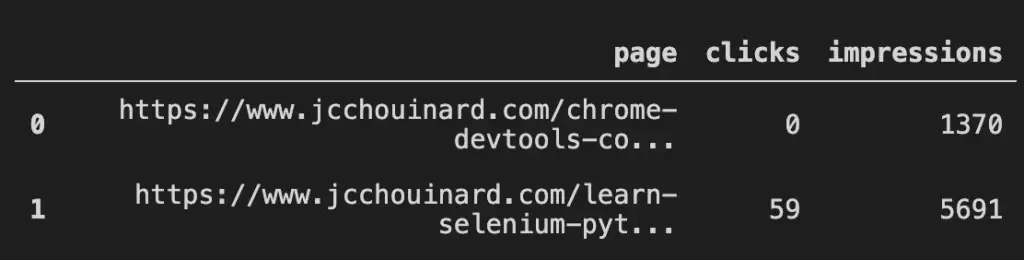

Let’s take those two URLs for example.

from gsc_by_url import gsc_by_url

list_of_urls = [

'/chrome-devtools-commands-for-seo/',

'/learn-selenium-python-seo-automation/'

]

Here I use a list comprehension to prepend the domain to each path.

list_of_urls = [site + x for x in list_of_urls]

And then provide the arguments necessary to run the gsc_by_url function.

args = webmasters_service,site,list_of_urls,creds,start_date,end_date

Let’s run it and see what we can get.

gsc_by_url(*args)

The result is a simple DataFrame with the clicks and the impressions for those pages.

It is very simple, but expand that to tens of thousands of pages and the data can be massively useful to prioritise which URLs have actual value.
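To give an idea of where such a table comes from, here is a minimal sketch, not the actual code of gsc_by_url. It assumes the usual shape of a Search Analytics response, where each row has a keys list (one value per requested dimension) plus the metrics. The rows_to_records helper and the sample rows are made up for illustration.

```python
def rows_to_records(rows, dimensions):
    """Hypothetical helper: flatten API-style rows into flat dictionaries."""
    records = []
    for row in rows:
        # 'keys' holds one value per requested dimension, in the same order.
        record = dict(zip(dimensions, row['keys']))
        record['clicks'] = row.get('clicks', 0)
        record['impressions'] = row.get('impressions', 0)
        records.append(record)
    return records


# Made-up sample rows, shaped like a Search Analytics response.
sample_rows = [
    {'keys': ['https://www.example.com/a/'], 'clicks': 12, 'impressions': 340},
    {'keys': ['https://www.example.com/b/'], 'clicks': 3, 'impressions': 95},
]

records = rows_to_records(sample_rows, ['page'])
for record in records:
    print(record)
```

A list of flat dictionaries like this can be passed directly to pandas.DataFrame() to get one row per URL.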

Extract GSC data Using Filters

The second function is to extract data by filtering specific elements.

For example, I want to extract only the queries that contain “python”.

from gsc_with_filters import gsc_with_filters

# Filters

dimension = 'query'

operator = 'contains'

expression = 'python'

Again, providing the arguments of the function containing the credentials, filters, and the dates, I can now run gsc_with_filters.

args = webmasters_service,site,creds,dimension,operator,expression,start_date,end_date

gsc_with_filters(*args,rowLimit=25000)

The result is a DataFrame that contains all pages that ranked for queries that contain the word “Python”.

By default, this function returns 1,000 rows, but you can add the rowLimit argument to request up to 25K rows.
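For reference, here is a sketch of what a filtered request body can look like. The field names (startDate, endDate, dimensions, dimensionFilterGroups, rowLimit, startRow) are the ones used by the Search Analytics query endpoint. The build_request helper and the choice of the page and query dimensions are assumptions for illustration, not the internals of gsc_with_filters.

```python
def build_request(start_date, end_date, dimension, operator, expression,
                  row_limit=1000, start_row=0):
    """Hypothetical helper: build a query body with one dimension filter."""
    return {
        'startDate': start_date,
        'endDate': end_date,
        # Assumption: report pages and queries together.
        'dimensions': ['page', 'query'],
        'dimensionFilterGroups': [{
            'filters': [{
                'dimension': dimension,
                'operator': operator,
                'expression': expression,
            }]
        }],
        'rowLimit': row_limit,
        'startRow': start_row,
    }


request = build_request('2020-07-15', '2020-07-25',
                        'query', 'contains', 'python',
                        row_limit=25000)
print(request)
```

With the google-api-python-client library, a body like this is typically sent with webmasters_service.searchanalytics().query(siteUrl=site, body=request).execute().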

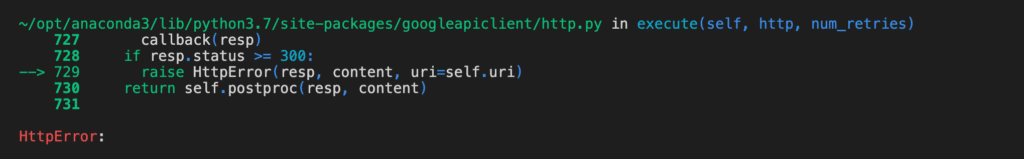

If we set the rowLimit to more than 25K, we will get an HTTPError. That’s because the limit we asked for is higher than what the GSC API allows in a single request.

To extract more than 25,000 rows, we will need to create loops using a combination of the startRow and rowLimit arguments in order to make multiple HTTP requests of 25K rows.

Let’s look at how to do that.
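As a rough sketch of the idea (not the code from the repository), the loop below keeps requesting pages of 25K rows and moves startRow forward until a page comes back shorter than the limit. The fetch_page callable is a hypothetical stand-in for the real API call, and fake_fetch_page only simulates a day with 60,000 rows.

```python
MAX_ROWS = 25000  # per-request limit discussed above


def fetch_all_rows(fetch_page, row_limit=MAX_ROWS):
    """Call fetch_page(start_row, row_limit) until a short or empty page comes back."""
    all_rows = []
    start_row = 0
    while True:
        rows = fetch_page(start_row, row_limit)
        all_rows.extend(rows)
        if len(rows) < row_limit:
            break  # last page reached
        start_row += row_limit
    return all_rows


# Stand-in for the API: pretends that one day has 60,000 rows.
FAKE_TOTAL = 60000


def fake_fetch_page(start_row, row_limit):
    end = min(start_row + row_limit, FAKE_TOTAL)
    return [{'keys': [f'query {i}']} for i in range(start_row, end)]


rows = fetch_all_rows(fake_fetch_page)
print(len(rows))
```

Stopping on a short page also covers the case where the total is an exact multiple of 25K: the next request simply returns an empty page.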

Extract All Your Google Search Console Data

This next function is my favourite. With this one, we will extract 100% of the data from Google Search Console.

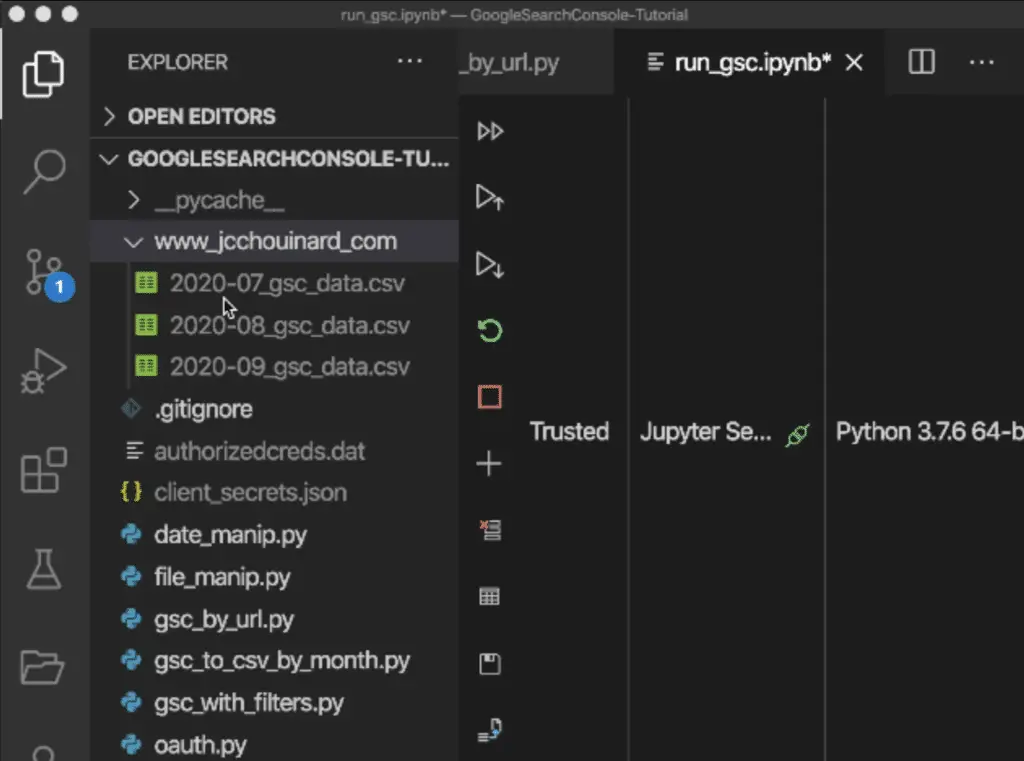

The script creates an output folder named after your website and saves your GSC data in CSV files, aggregated by month.

You can also look at how to extract all your GSC data with a simple script.

If some CSVs already exist from previous extractions, it will check the dates in those CSVs to make sure that existing dates are not extracted again.

The dates that have not been extracted yet will be processed and appended to the right CSV file.
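The date check can be pictured with a small sketch like the one below. It is only an illustration of the logic, assuming dates are stored as YYYY-MM-DD strings. The date_range and dates_to_extract helpers are hypothetical names, not functions from the repository.

```python
from datetime import date, timedelta


def date_range(start, end):
    """Return every date from start to end, both included."""
    days = (end - start).days
    return [start + timedelta(days=n) for n in range(days + 1)]


def dates_to_extract(start, end, existing_dates):
    """Keep only the dates that are not already saved in a CSV."""
    existing = set(existing_dates)
    return [d for d in date_range(start, end) if d.isoformat() not in existing]


# Made-up example: two days were already extracted in a previous run.
already_saved = ['2020-07-15', '2020-07-16']
todo = dates_to_extract(date(2020, 7, 15), date(2020, 7, 18), already_saved)
print([d.isoformat() for d in todo])  # ['2020-07-17', '2020-07-18']
```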

Let’s run the gsc_to_csv function to see more details of how the data is extracted.

from gsc_to_csv_by_month import gsc_to_csv

args = webmasters_service,site,output,creds,start_date

gsc_to_csv(*args,end_date=end_date)

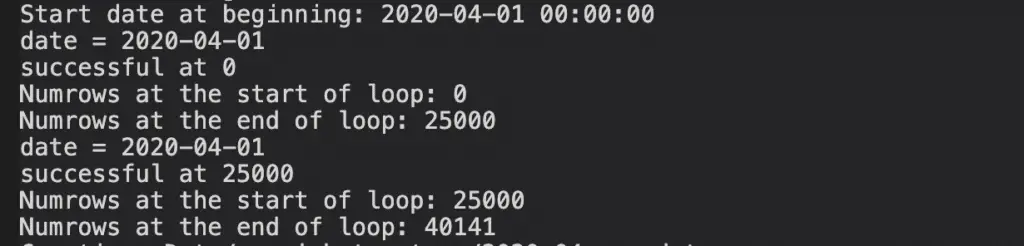

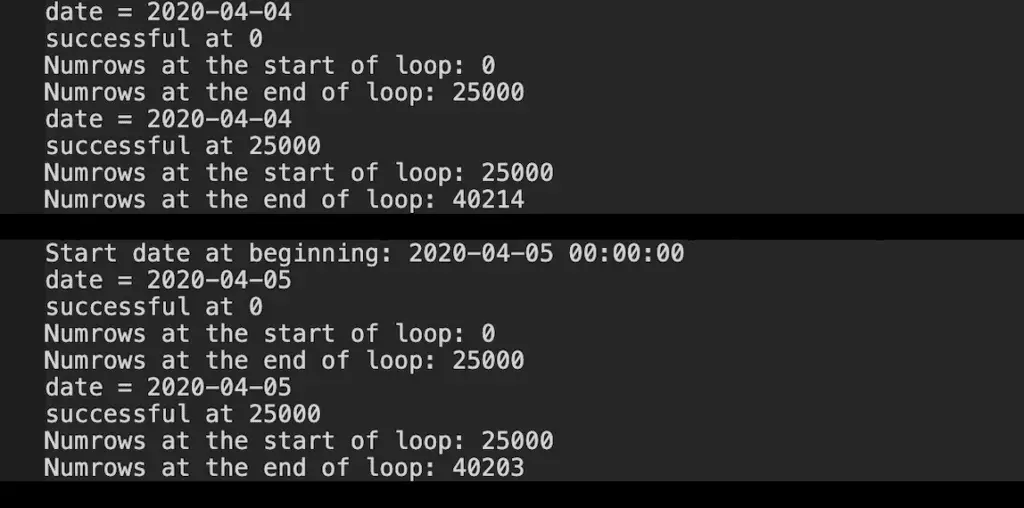

It loops one day at a time. For each day, it makes a first request to extract the first 25K rows of data and appends those rows to a DataFrame. Then, for that same date, it makes another request to get rows 25K to 50K, then 50K to 75K, and so on until all the rows are extracted for that specific date.

Once that day is completed, it writes the data to a new or existing CSV file, depending on the situation. Once this is done, it moves to the next date and repeats the process.

That process allows us not to lose any progress made, and never to extract the same date twice.

Here, since my website is small, it doesn’t need to get the data in batches: I only have around 4,000 rows to extract per day. But you can still see how this works.
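To illustrate the "one day at a time, one CSV per month" idea, here is a minimal sketch using only the standard library. The file naming scheme, the column names and the append_day helper are assumptions made for this example. The real function works with DataFrames and may name its files differently.

```python
import csv
import os
import tempfile

FIELDS = ['date', 'page', 'query', 'clicks', 'impressions']  # assumed columns


def monthly_csv_path(folder, day):
    """Hypothetical naming scheme: one file per month, e.g. 2020-07_gsc_data.csv."""
    return os.path.join(folder, f'{day[:7]}_gsc_data.csv')


def append_day(folder, day, rows):
    """Append one day of rows to the CSV of its month, creating the file if needed."""
    os.makedirs(folder, exist_ok=True)
    path = monthly_csv_path(folder, day)
    is_new = not os.path.exists(path)
    with open(path, 'a', newline='', encoding='utf-8') as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()  # header only once per file
        for row in rows:
            writer.writerow({'date': day, **row})
    return path


out_dir = tempfile.mkdtemp()
row = {'page': '/a/', 'query': 'python seo', 'clicks': 1, 'impressions': 10}

path_july = append_day(out_dir, '2020-07-31', [row])
path_aug = append_day(out_dir, '2020-08-01', [row])
append_day(out_dir, '2020-08-02', [row])  # appended to the August file

with open(path_aug, newline='', encoding='utf-8') as f:
    saved = list(csv.DictReader(f))
print([r['date'] for r in saved])
```

Because each day is written as soon as it is complete, an interrupted run loses at most the day in progress.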

gsc_to_csv() Function’s Arguments

Note that the end_date argument is optional. By default, this function extracts data up to three days in the past, as this is the most recent data reported in Google Search Console.
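A default like this can be computed in a couple of lines. The sketch below is an assumption about how such a default might be derived, and default_end_date is a hypothetical name rather than part of the module.

```python
from datetime import date, timedelta


def default_end_date(today=None, lag_days=3):
    """Return the date lag_days before today, as a YYYY-MM-DD string."""
    today = today or date.today()
    return (today - timedelta(days=lag_days)).isoformat()


# With a fixed "today" so the result is reproducible.
print(default_end_date(date(2020, 7, 28)))  # 2020-07-25
```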

Right now, let’s leave the end_date empty to see what happens.

from gsc_to_csv_by_month import gsc_to_csv

args = webmasters_service,site,output,creds,start_date

gsc_to_csv(*args)

The gsc_to_csv function checks the dates in the CSVs and tells you when a date was already extracted by printing Existing Date: YYYY-MM-DD Timestamp. When it discovers a new date, it processes it and appends the new data to the existing CSV.

Note that it has also created a new CSV file for the month that was never processed.

Cool, right?

Let’s look at another optional argument, the gz argument.

from gsc_to_csv_by_month import gsc_to_csv

start_date = '2020-07-15'

end_date = '2020-07-25'

args = webmasters_service,site,output,creds,start_date

gsc_to_csv(*args,end_date=end_date,gz=True)

The gz argument lets you save the data to a compressed .gz file in order to save some space on your hard drive.

This is especially important because, with the GSC API, you can quickly get a large amount of data, even for a relatively small website. If you set the argument to True and run this, you’ll re-extract the data, this time to a compressed CSV file.
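To get a feel for the space saved, here is a small standalone sketch that writes the same made-up rows to a plain CSV and to a gzip-compressed CSV, then compares the file sizes. It uses the standard gzip and csv modules, which is an assumption for illustration. The gz argument of gsc_to_csv may be implemented differently.

```python
import csv
import gzip
import os
import tempfile

header = ['date', 'page', 'query', 'clicks', 'impressions']
# Made-up, repetitive data, similar in spirit to a GSC export.
rows = [['2020-07-15', '/a/', 'python seo', 1, 10]] * 1000


def write_rows(f):
    """Write the header and all rows to an open text file."""
    writer = csv.writer(f)
    writer.writerow(header)
    writer.writerows(rows)


folder = tempfile.mkdtemp()
plain_path = os.path.join(folder, 'gsc_data.csv')
gz_path = plain_path + '.gz'

with open(plain_path, 'w', newline='', encoding='utf-8') as f:
    write_rows(f)

# gzip.open in text mode ('wt') compresses on the fly.
with gzip.open(gz_path, 'wt', newline='', encoding='utf-8') as f:
    write_rows(f)

plain_size = os.path.getsize(plain_path)
gz_size = os.path.getsize(gz_path)
print(plain_size, gz_size)

# The compressed file reads back to the same content.
with gzip.open(gz_path, 'rt', newline='', encoding='utf-8') as f:
    restored = list(csv.reader(f))
```

pandas can also read a .csv.gz file directly with read_csv, so compressed files remain easy to load later.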

Isn’t this awesome?

Automate Your Python Script

To automate the Google Search Console API queries, schedule your Python script with Windows Task Scheduler, or automate it using cron on Mac.

Google Search Console Data Will Be Sampled

When you extract data by query, Google Search Console reports only sampled data.

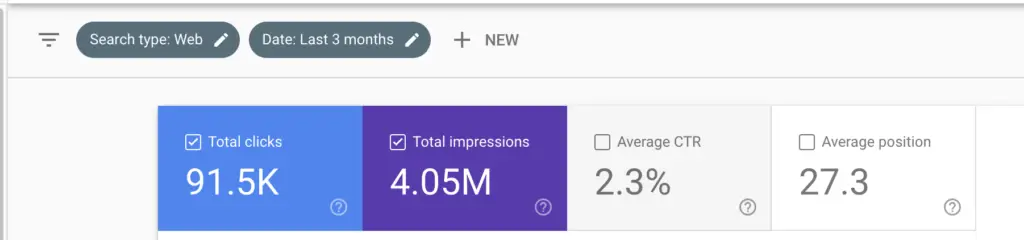

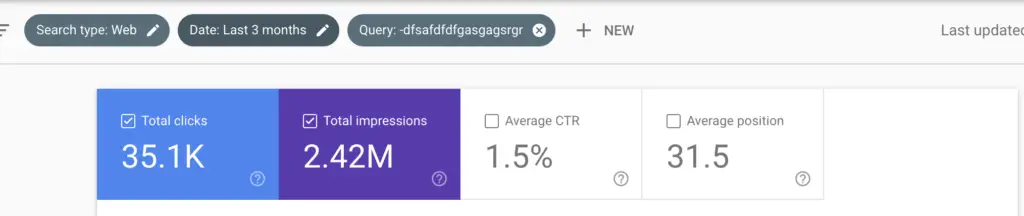

For example here, in the last 3 months, the number of clicks is 91.5K. If I exclude a non-existing query, I get only 35.1K clicks.

That’s how sampled the end data will be.
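Using the two numbers above, a quick calculation shows what share of the clicks is still there once the data is broken down by query:

```python
total_clicks = 91_500        # clicks reported without any query filter
query_level_clicks = 35_100  # clicks left once a query filter is applied

share = query_level_clicks / total_clicks
print(f'{share:.1%} of clicks have query-level data')    # 38.4%
print(f'{1 - share:.1%} of clicks are not attributed')   # 61.6%
```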

Conclusion

I hope you enjoyed this overview of the Python functions that I created to help you extract all your Google Search Console data using the API.

If you have any questions, please let me know in the comments below and don’t forget to like the video and subscribe to my channel to stay informed when I create new tutorials.

In the next video, we will look at some SEO reports you can actually create using that data.

SEO Strategist at Tripadvisor, ex- Seek (Melbourne, Australia). Specialized in technical SEO. Writer in Python, Information Retrieval, SEO and machine learning. Guest author at SearchEngineJournal, SearchEngineLand and OnCrawl.