In this post, I will cover some of what I have learned reading the Google patent titled “Selection of an Image or Images Most Representative of a Set of Images” by Shumeet Baluja and Yushi Jing.

What is the Patent About?

This patent describes how Google may estimate the quality of an image based on how similar it is to the other images in the search results.

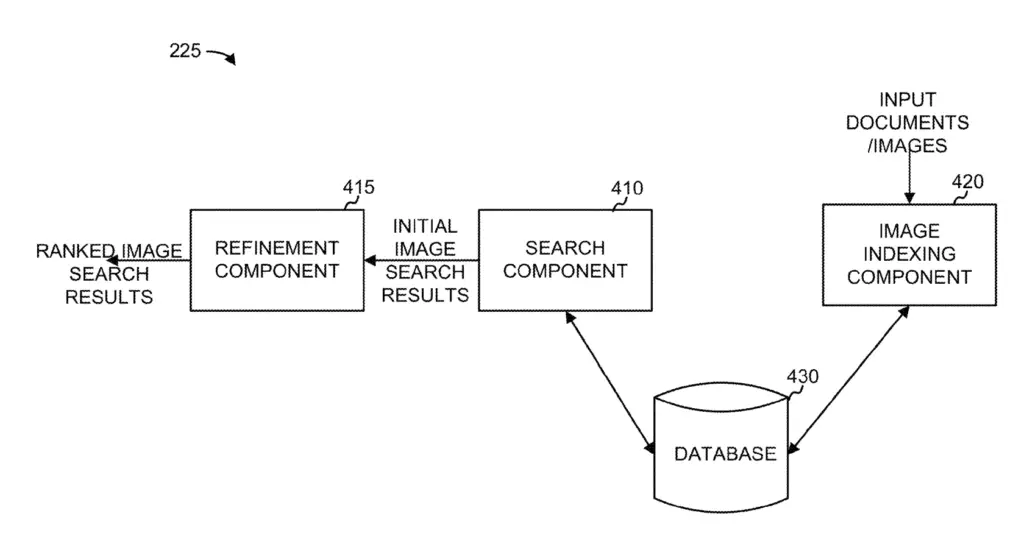

The patent is mostly about the “refinement component” which extends initial image search results to make them more relevant.

The example mentioned is that of the query “Eiffel Tower”. How can Google make sure not to show images with the Eiffel tower far in the background, or even where you can only see a portion of the tower, both of which have low relevancy to the query?

This patent explains how they could achieve that in 3 ways:

- Selecting the best ranked image based on a determined feature

- Using a comparison function with ranking to identify the best representative of the set

- Comparing descriptive text and features of images to identify relevancy to the query

Highlights From The Most Representative Image Patent

- The rank refinement happens after an initial text-based ranking was made by the search component

- The important image features depend on the query

- Depending on the query, some features may have more impact on ranking than others

- Uses the SIFT (Scale-Invariant Feature Transform) algorithm to identify objects of interest

- Faces and objects of interest (e.g. landmarks, …) are important elements that can trigger inclusion in or exclusion from search results.

- Focus, color distribution, contrast are all elements that can influence the score of an image

- The refinement component can be used to select the best image to display next to a product or a news article. The highest ranked would be chosen.

Basics of Image Search Engine

Here is the general process of how the Image Search Engine works at Google:

To begin, a user sends a query to the image search engine.

Then, Google compares the query to the terms associated with each image (e.g. text from the filename, the alt attribute, caption, text within a specified number of pixels of the image …)

After that, it returns the images that match the query based on that text. Some of these images may not be relevant.

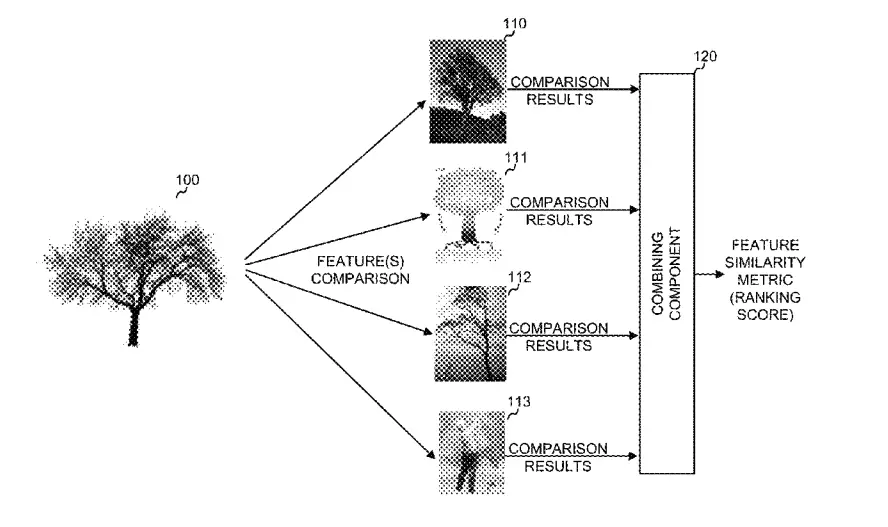

Google then generates a combined feature similarity metric (also called a ranking score) for each image. The similarity metric represents a feature-by-feature comparison of each image against the others.

Next, images are ranked based on their feature similarity metrics. The images with the highest similarity ranks are considered the most representative images.

Finally, images are presented to the user in order of the similarity ranks from highest to lowest.

Low ranking images may be removed from search results.

Understand the Image Search Engine Core Components

At its core, the Image Search Engine includes:

- Image Indexing Component

- Search Component

- Database

- Refinement Component

How the Refinement Component Works

Let’s have a deeper dive into the refinement component: what it is and what it does.

Google’s refinement component refines the initial image search results that the search component returned by comparing the query to the text associated with images.

The initial ranking can include images that are not very relevant.

For instance, for the query “Eiffel Tower” it could return images of people taking a selfie in the park near the Eiffel tower… simply because the image was named “eiffel-tower.jpg” and the web page was saying that it was a “nice walk at the Eiffel tower”.

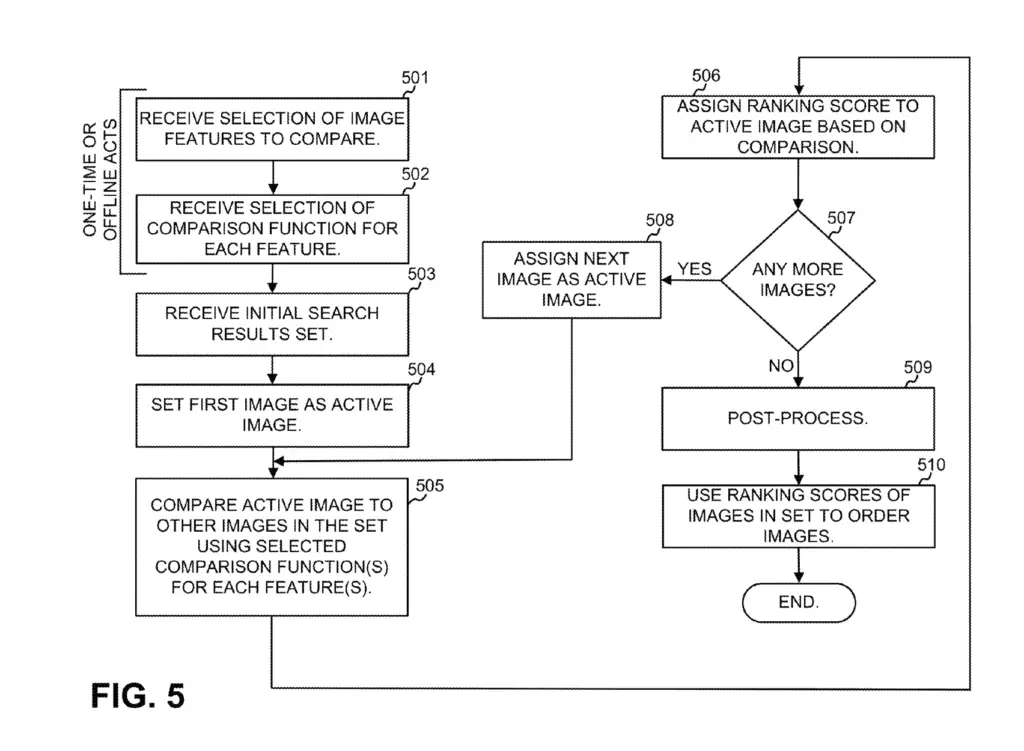

- The refinement component receives the top N images from the search component

- Each image received has features assigned to it

- The features of images are converted to numerical histograms for mathematical comparison

- One image after the other, each image’s feature is compared to the corresponding feature of the other images in the set

- Each time an image’s feature is very similar to another image in the set the score is increased

- The images with the highest scores are re-ranked as higher quality

- Features that are very similar across images can be given more weight in the ranking
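
The steps above can be sketched in a few lines of Python. This is only an illustration, not the patent's implementation: the histogram intersection measure, the `0.8` threshold, the `rerank` function, and the sample histograms are all assumptions made for the example.

```python
from typing import Dict, List

Histogram = List[float]
Features = Dict[str, Histogram]  # feature name -> normalized histogram


def intersection(h1: Histogram, h2: Histogram) -> float:
    """Histogram intersection: 1.0 for identical normalized histograms, 0.0 for disjoint ones."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def rerank(images: Dict[str, Features], threshold: float = 0.8) -> List[str]:
    """Order image ids by how many (other image, feature) pairs they closely match."""
    scores = {name: 0 for name in images}
    for name, feats in images.items():
        for other, other_feats in images.items():
            if other == name:
                continue
            for feature, hist in feats.items():
                # The score goes up each time a feature is similar enough (assumed threshold).
                if intersection(hist, other_feats[feature]) >= threshold:
                    scores[name] += 1
    return sorted(scores, key=scores.get, reverse=True)


# Hypothetical top-N set returned by the text-based search component.
top_n = {
    "tower_centered": {"color": [0.7, 0.2, 0.1], "shape": [0.1, 0.8, 0.1]},
    "tower_at_dusk": {"color": [0.6, 0.3, 0.1], "shape": [0.1, 0.7, 0.2]},
    "selfie_in_park": {"color": [0.1, 0.2, 0.7], "shape": [0.6, 0.1, 0.3]},
}
ranking = rerank(top_n)
print(ranking)
```

In this toy set, the two tower images reinforce each other and the selfie ends up last, which is the behaviour the refinement component is meant to produce.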

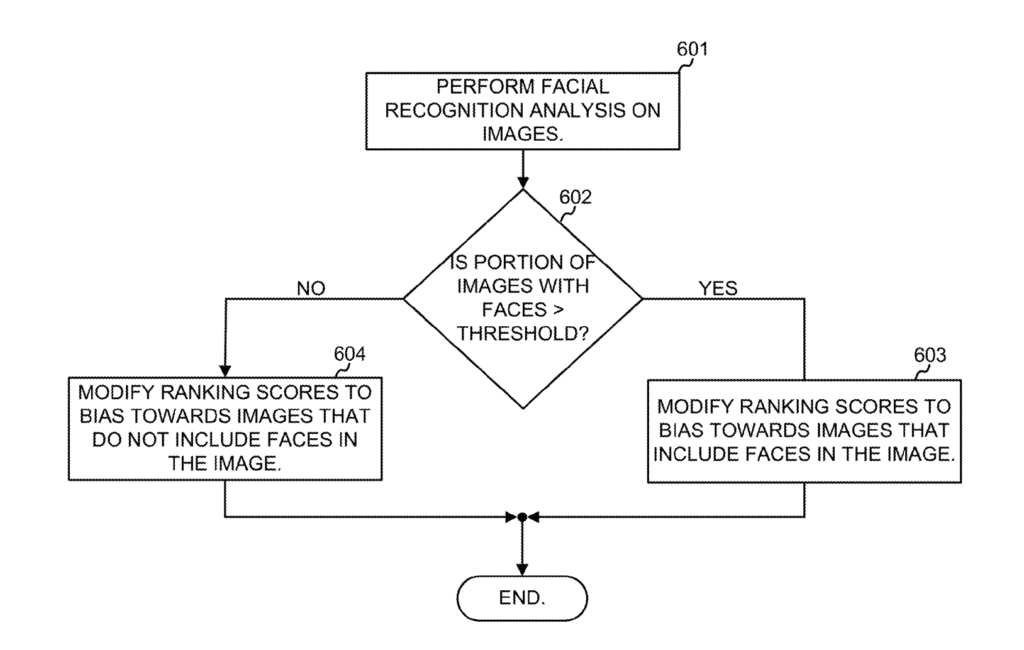

- Use facial recognition on all images to determine if query is likely to be searching for a person

- Check for objects of interest (e.g. the Eiffel Tower) and improve ranking based on where they are in the image.

- Evaluate the quality of the images by looking at focus, color distribution, contrast, etc.

How Google Assigns Image Features

Drawing on multiple patents, I have listed the features that can be assigned to images by the Image Features Module. If you are interested in how image features are generated, the best patent to read is “Ranking of images and image labels”.

- Color

- Brightness

- Texture

- Shape

- Edges

- Wavelet based techniques

- Intensity

How are Features Compared?

Google compares features by dividing images into small patches (e.g. rectangles or circles).

For each patch, a histogram is computed (color, intensity, edge, texture, …).

Each histogram becomes a feature of the image.

The refinement component then compares the histograms of one image with those of another.

The histograms make it easy to convert the features into numbers that can easily be compared mathematically.
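
To make the idea concrete, here is a minimal sketch of patch histograms on tiny grayscale images. The patch size, the number of bins, the histogram intersection measure, and the function names are assumptions chosen for the example, not details taken from the patent.

```python
from typing import List

Image = List[List[int]]  # rows of grayscale pixel intensities in 0..255


def patch_histograms(image: Image, patch: int = 2, bins: int = 4) -> List[List[float]]:
    """Split the image into square patches and return one normalized intensity histogram per patch."""
    histograms = []
    for top in range(0, len(image), patch):
        for left in range(0, len(image[0]), patch):
            counts = [0] * bins
            for row in image[top:top + patch]:
                for value in row[left:left + patch]:
                    counts[min(value * bins // 256, bins - 1)] += 1
            total = sum(counts)
            histograms.append([c / total for c in counts])
    return histograms


def similarity(a: Image, b: Image) -> float:
    """Mean histogram intersection across corresponding patches (1.0 means identical histograms)."""
    pairs = list(zip(patch_histograms(a), patch_histograms(b)))
    return sum(sum(min(x, y) for x, y in zip(h1, h2)) for h1, h2 in pairs) / len(pairs)


dark_left = [[10, 20, 200, 210], [15, 25, 205, 215], [10, 20, 200, 210], [15, 25, 205, 215]]
dark_left_noisy = [[12, 18, 198, 220], [14, 30, 207, 211], [11, 22, 201, 209], [16, 24, 203, 214]]
dark_right = [row[::-1] for row in dark_left]  # same pixels, mirrored horizontally

print(similarity(dark_left, dark_left_noisy))  # 1.0
print(similarity(dark_left, dark_right))       # 0.0
```

Small pixel-level noise does not change the histograms, while mirroring the image moves the dark and bright areas into different patches and the similarity drops to zero.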

How Image features are Weighted

Features do not all carry the same weight: their importance depends on the query.

For the query “rose” the color may be more important, whereas for the query “table” the shape may be more important and the color less.

To understand how features are weighted we need to move to the Clustering Queries for Image Search patent.

The weights are assigned by clustering queries and comparing the feature similarity of the images in each cluster. The most important features in each query cluster are then given more weight when providing search results.
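
One possible reading of this is sketched below: within a query cluster, a feature that stays consistent from image to image gets a larger share of the weight. The `feature_weights` function, the use of mean pairwise histogram intersection, and the sample "rose" cluster are all assumptions made for illustration; the patent does not spell out this formula.

```python
from itertools import combinations
from typing import Dict, List

Features = Dict[str, List[float]]  # feature name -> normalized histogram


def feature_weights(cluster_images: List[Features]) -> Dict[str, float]:
    """Weight each feature by its mean pairwise similarity within one query cluster."""
    names = list(cluster_images[0])
    pairs = list(combinations(cluster_images, 2))
    consistency = {}
    for name in names:
        sims = [sum(min(x, y) for x, y in zip(a[name], b[name])) for a, b in pairs]
        consistency[name] = sum(sims) / len(sims)
    total = sum(consistency.values())
    # Normalize so that the weights add up to 1.
    return {name: value / total for name, value in consistency.items()}


# Hypothetical images for a "rose" query cluster: colors agree, shapes vary.
rose_cluster = [
    {"color": [0.9, 0.1], "shape": [0.2, 0.8]},
    {"color": [0.8, 0.2], "shape": [0.7, 0.3]},
    {"color": [0.9, 0.1], "shape": [0.5, 0.5]},
]
weights = feature_weights(rose_cluster)
print(weights)
```

With this sample data, color ends up with a higher weight than shape, matching the "rose" intuition above.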

How Faces are Handled

To remove bias, Google may perform facial recognition on all images for a given query in order to determine if the query is intended to find a person.

If a lot of images contain faces, Google may adjust weights to make images containing faces more prominent or vice-versa.
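
As a rough sketch of that adjustment, the function below turns the share of images containing a face into a multiplier. The `face_weight` name, the `0.6` and `0.1` cut-offs, and the `1.5` and `0.5` multipliers are hypothetical values picked for the example, and the face detection step itself is assumed to have happened already.

```python
from typing import List


def face_weight(has_face: List[bool], high: float = 0.6, low: float = 0.1) -> float:
    """Return a multiplier for images containing faces, based on how common faces are in the result set."""
    share = sum(has_face) / len(has_face)
    if share >= high:
        return 1.5  # the query likely targets a person: promote images with faces
    if share <= low:
        return 0.5  # faces are rare for this query: demote images with faces
    return 1.0      # ambiguous: leave the score unchanged


person_query = [True] * 8 + [False] * 2    # e.g. results for a celebrity name
landmark_query = [False] * 19 + [True]     # e.g. results for a landmark
print(face_weight(person_query), face_weight(landmark_query))
```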

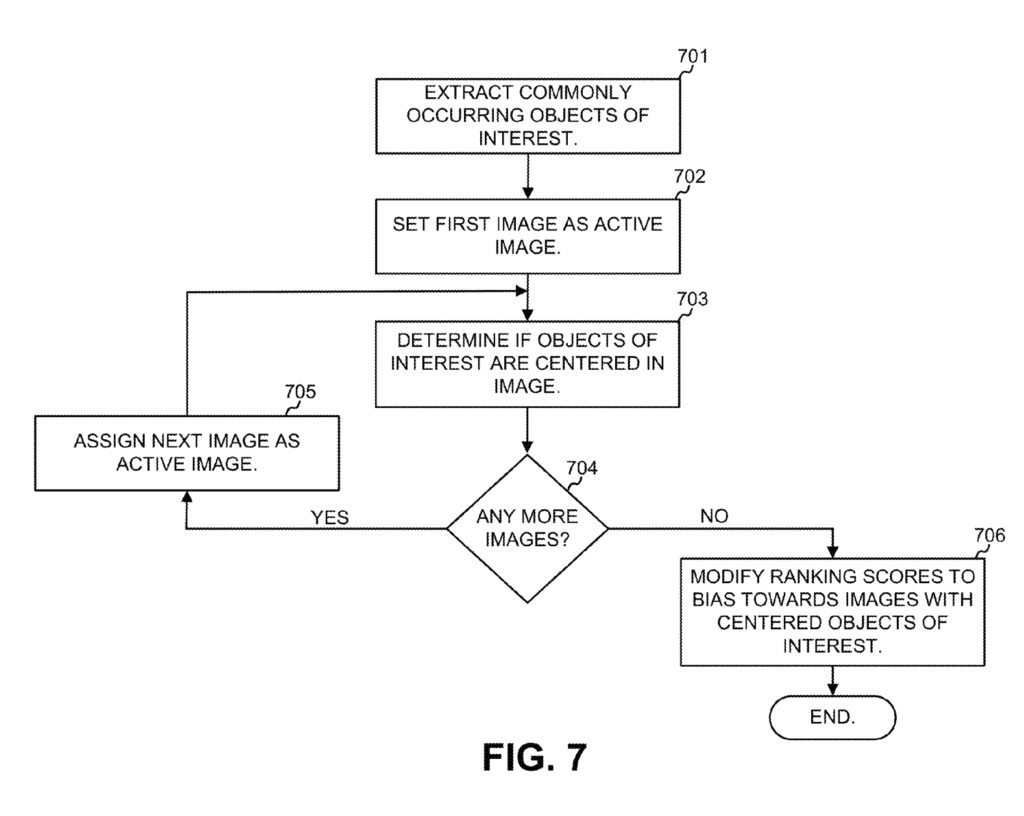

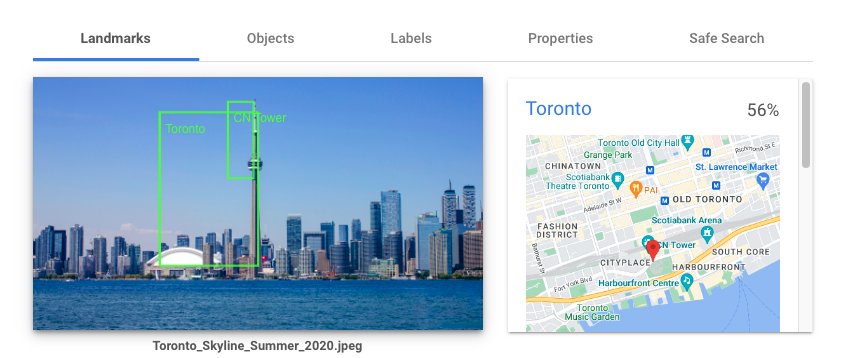

How Objects of Interest are Handled

In other cases, Google may want to show objects of interest such as landmarks.

A user searching for the “Eiffel tower” should be more interested in images where the landmark is centered, in primary focus and not cropped.

The module would thus search for similar objects of interest across all images and check whether they are centered.

How centered the object is can be expressed on a scale from 0 to 1 and used as a weight that multiplies the feature score.

Sometimes, the center is not optimal, so an alternative target location can be defined.
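
Here is a small sketch of such a 0 to 1 score. It assumes the object of interest has already been located as a bounding box; the `centeredness` function, the distance-based formula, and the sample boxes are illustrative assumptions, and the optional `target` parameter stands in for the alternative target location.

```python
from math import hypot
from typing import Tuple

Box = Tuple[float, float, float, float]  # left, top, right, bottom in pixels


def centeredness(box: Box, width: float, height: float,
                 target: Tuple[float, float] = (0.5, 0.5)) -> float:
    """Score in [0, 1]: 1.0 when the box center sits on the target, 0.0 at the farthest corner."""
    cx = (box[0] + box[2]) / 2 / width
    cy = (box[1] + box[3]) / 2 / height
    distance = hypot(cx - target[0], cy - target[1])
    # Farthest possible distance from the target to any image corner, in relative units.
    farthest = max(hypot(x - target[0], y - target[1]) for x in (0.0, 1.0) for y in (0.0, 1.0))
    return 1.0 - distance / farthest


feature_score = 0.9  # hypothetical similarity score for the image
centered = centeredness((300, 100, 500, 500), width=800, height=600)
off_center = centeredness((0, 400, 100, 600), width=800, height=600)
print(feature_score * centered, feature_score * off_center)
```

The centered box keeps the full feature score, while the box tucked into the bottom-left corner only keeps a fraction of it.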

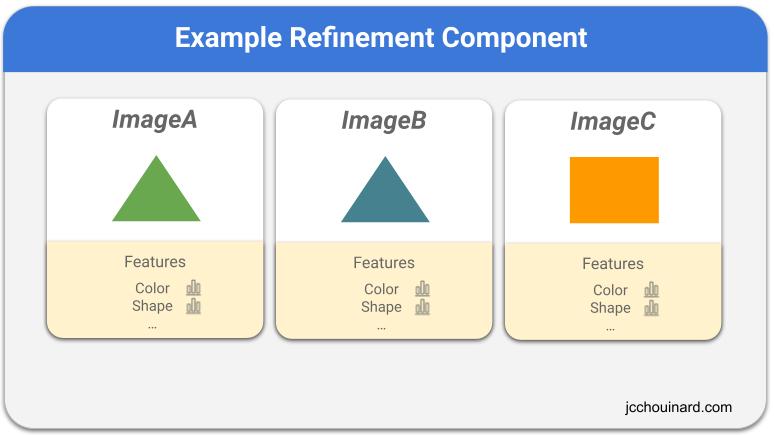

Example of refinement where all features are equal

Let’s say we have 1000 images for “Eiffel tower”.

The first image (ImageA) is compared against the second (ImageB).

The shape histogram of ImageA is compared to the shape histogram of ImageB.

The shape of ImageA is very similar to the shape of ImageB (the similarity is above a threshold).

Thus, ImageA’s score increases.

Then, the color of ImageA is compared to the color of ImageB. It is not an exact match but is still above the threshold, so ImageA’s score increases again.

All features are iterated this way.

Then, ImageA is compared against ImageC. Here the shape and color similarities are both below the threshold, so ImageA’s score does not increase.

After that, ImageB and ImageC are processed the same way.

In the end, ImageC doesn’t share any similarity with the other images and will be removed from the search results.

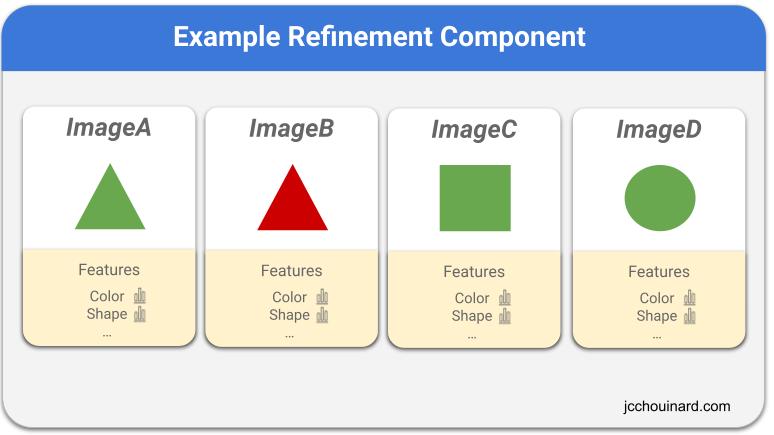

Example of refinement where some features have more weight

Now let’s take a second set of image search results.

We still have different shapes, but based on the search results that we see, we can understand that the color seems to be more important than the shape.

In that case, Google would multiply the weight by the feature score to determine whether the image score will improve.

This way, the triangle in ImageB would be the one excluded from search.
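
The effect of the weights can be shown with a toy calculation. The three images below are hypothetical stand-ins (a red circle, a blue triangle and a red triangle) and do not reproduce the figure; the `weighted_scores` function and the weight values are likewise assumptions for the example.

```python
from typing import Dict, List

# Hypothetical one-hot histograms: color bins are [red, blue], shape bins are [circle, triangle].
images: Dict[str, Dict[str, List[float]]] = {
    "ImageA": {"color": [1.0, 0.0], "shape": [1.0, 0.0]},  # red circle
    "ImageB": {"color": [0.0, 1.0], "shape": [0.0, 1.0]},  # blue triangle
    "ImageC": {"color": [1.0, 0.0], "shape": [0.0, 1.0]},  # red triangle
}


def weighted_scores(weights: Dict[str, float]) -> Dict[str, float]:
    """Sum weight * similarity over every other image and every feature."""
    scores = {}
    for name, feats in images.items():
        total = 0.0
        for other, other_feats in images.items():
            if other != name:
                for feature, weight in weights.items():
                    sim = sum(min(x, y) for x, y in zip(feats[feature], other_feats[feature]))
                    total += weight * sim
        scores[name] = total
    return scores


equal = weighted_scores({"color": 0.5, "shape": 0.5})
color_heavy = weighted_scores({"color": 0.8, "shape": 0.2})
print(equal)        # ImageA and ImageB are tied
print(color_heavy)  # the tie is broken in favor of the red images
print(min(color_heavy, key=color_heavy.get))  # ImageB
```

With equal weights, the red circle and the blue triangle are tied. Once color carries more weight, the blue triangle drops to the bottom and becomes the candidate for exclusion.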

What Can SEOs Do About It?

In order to rank better in image search, SEOs should create images that are as similar as possible to the images currently ranking for a query.

Additionally, SEOs should use high-quality, in-focus, well-lit images on their site.

When publishing images of landmarks, people, restaurants, products, or any well-known entity, make sure that the entity is centered in the image.

If the ranking images show people, include people in yours. If they show objects of interest, include those objects of interest.

When trying to rank an image for a given query, make sure that the image has features “similar” to what you are seeing in the search results (color, shape, objects of interest, brightness, etc.).

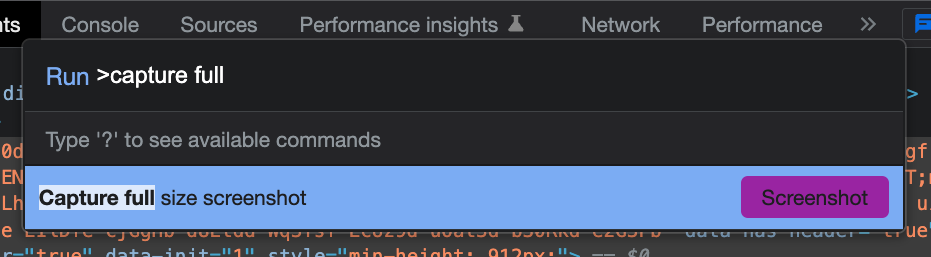

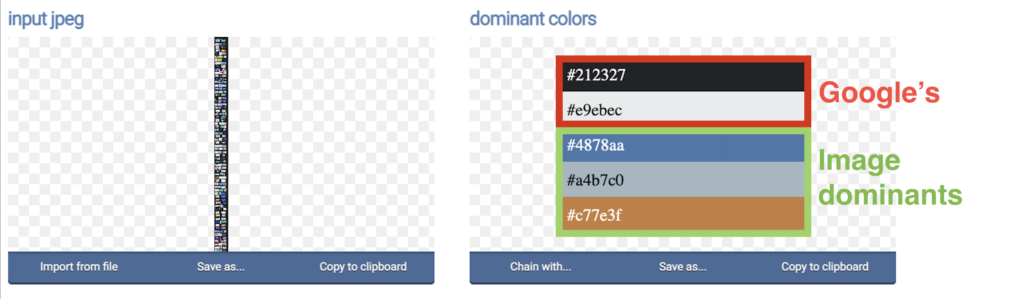

Check Dominant Colors

Open Google Images and search for a query (e.g. “SEO”).

Take a full-page screenshot of the results.

Copy the resulting image into OnlineJpgTools.
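
If you would rather script this check, the same idea can be sketched in Python. The example assumes the screenshot has already been loaded as a list of RGB tuples with an imaging library of your choice; the `dominant_colors` function, the bucket size of `64`, and the sample pixels are assumptions made for illustration.

```python
from collections import Counter
from typing import List, Tuple

RGB = Tuple[int, int, int]


def dominant_colors(pixels: List[RGB], top: int = 3, step: int = 64) -> List[Tuple[RGB, float]]:
    """Bucket each channel into bands of `step` levels and return the most common buckets with their share."""
    buckets = Counter(tuple(channel // step * step for channel in pixel) for pixel in pixels)
    return [(color, count / len(pixels)) for color, count in buckets.most_common(top)]


# Hypothetical pixels standing in for a screenshot of image search results.
screenshot = [(250, 250, 250)] * 6 + [(20, 90, 200)] * 3 + [(30, 100, 210)] * 1
palette = dominant_colors(screenshot)
print(palette)  # [((192, 192, 192), 0.6), ((0, 64, 192), 0.4)]
```

Bucketing groups near-identical shades together, so the two slightly different blues are counted as one dominant color.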

Check Landmarks With Cloud Vision API

With enough resources, you could scrape the first 100 images for each query and pass them through the Cloud Vision API to detect the landmarks that you need.

Patent Details

| Name | Selection of an image or images most representative of a set of images |
| --- | --- |
| Assignee | Google LLC |
| Filed | 2014 |
| First filed | 2006 |
| Status | Active |
| Expiration | 2026 |
| Appl No. | 14/288,695 |
| Inventor(s) | Shumeet Baluja and Yushi Jing |
| Patent | US9268795B2 |

What Categories is the Patent About?

- Image SEO

Google Search Infrastructure Involved

The “Selection of an image or images most representative of a set of images” patent mentions these elements from the Google Search Infrastructure:

- Image Search Engine

- Refinement Component

- Search Component

- Image Indexing Component

- Combining Component

Conclusion

The “Selection of an Image or Images Most Representative of a Set of Images” patent was interesting as it provided insights into how Google uses image similarity to rank images. In effect, Google uses the “wisdom of the crowd” to determine image quality: the image whose features are similar to those of the most other images should rank higher.

SEO Strategist at Tripadvisor, ex- Seek (Melbourne, Australia). Specialized in technical SEO. Writer in Python, Information Retrieval, SEO and machine learning. Guest author at SearchEngineJournal, SearchEngineLand and OnCrawl.